Data

Modernization of Commercial DB to Open-Source DB in AWS

An increasing number of organizations are opting for open-source software due to the greater control it offers. One major benefit of using open-source platforms is that they are often cost-free and don't result in vendor lock-in. Furthermore, utilizing open-source software leads to a reduced overall cost of ownership compared to closed-source and proprietary options.

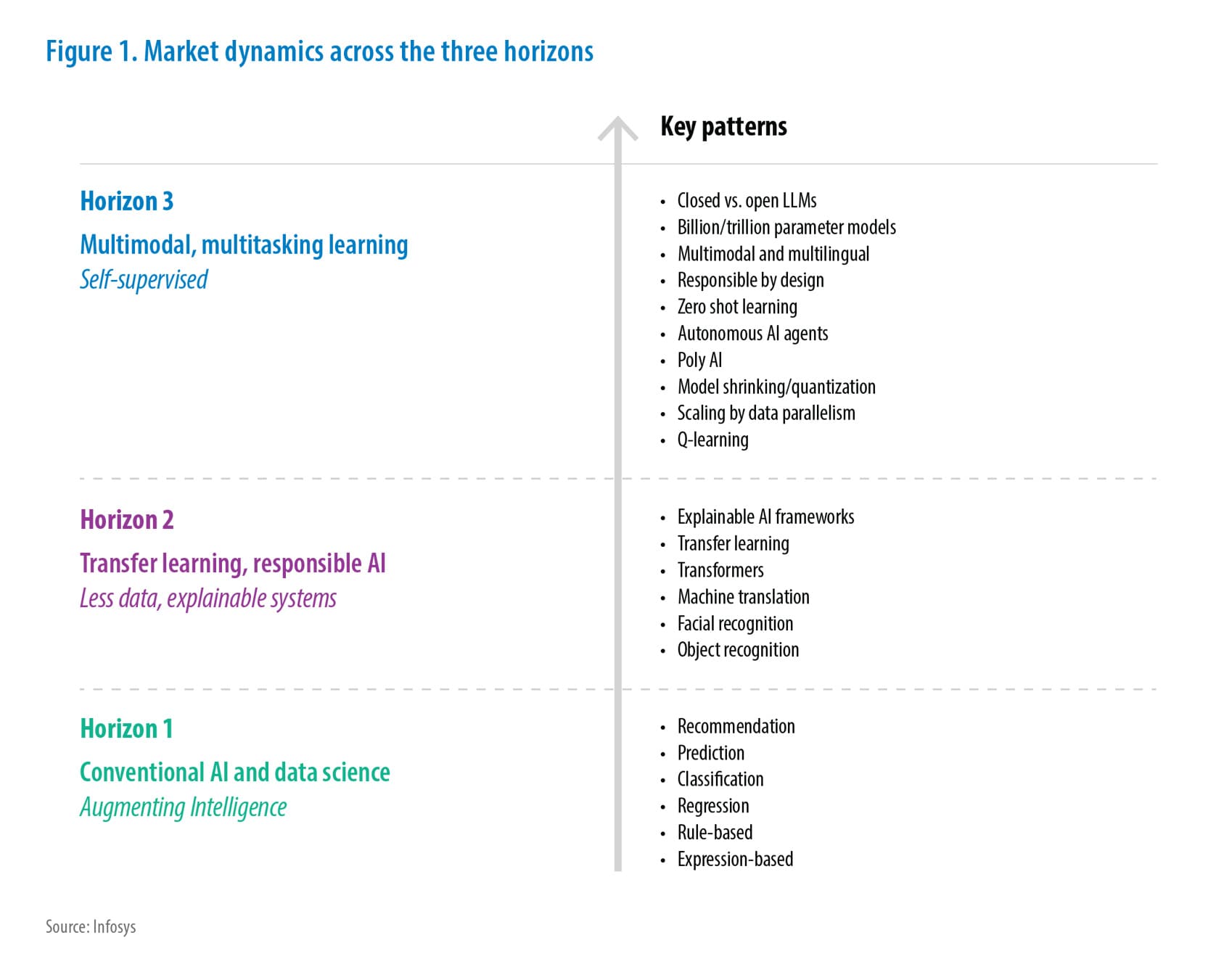

Insights

- As part of the client's ongoing modernization efforts, there is a requirement to eliminate commercial database components to cut down on licensing costs and enhance and improve the sustainability of the application in the long run.

- The database modernization initiative aims to explore ways of converting all commercial databases to open-source alternatives.

- This article presents a comprehensive overview of database modernization and migration to open-source databases on AWS.

The following topics will be discussed:

- The reasons for migrating from commercial databases to AWS RDS open-source databases

- An overview of the various AWS RDS open-source database offerings, including a comparison of their characteristics, pricing models, and the use cases they address

- The phases involved in a typical database migration process

- The migration tools offered by AWS, along with strategies and an analysis of common migration problems

- A guide to migrating to different AWS RDS open-source database offerings

- An examination of the migration journey based on Infosys customer experience using various tools

Why Migrate to AWS RDS Opensource Databases

Businesses that use commercial databases are typically subject to vendor lock-in periods, during which they are unable to switch to another vendor due to the high cost, duration, and complexity of doing so. This results in a high total cost of ownership, including the need to pay for additional features. In contrast, AWS RDS open-source offerings are designed to address these issues, and some features are enabled by default.

Some of the following facts further assert why open-source is the most economical destination:

- Total Cost of Ownership:

- No license fee. Product support is free of cost unless you opt for premium support.

- Up to 70% savings on the overall cost compared to commercial database offerings, excluding the execution cost. You will still have to pay the cost of computing and storage.

- Support: Basic customer support is offered for free, and paid support is also available for a nominal fee. Support costs are lower than those for commercial options.

- Security: Offers strong security features on par with commercial offerings, including column-level encryption and IAM authentication.

AWS RDS Open-Source Databases

Decision Tree

The following diagram provides the high-level decision tree followed to choose the database variant.

Figure 1. Database Decision Tree

Offerings

Amazon’s relational database service is a collection of managed services that makes it simple to set up, operate, and scale databases on the cloud. AWS RDS makes it easy to manage below mentioned open-source database engines. As per client guidelines, the RDS offerings below were considered for this database optimization program.

PostgreSQL: An advanced open-source relational database primarily used for web, mobile, and geospatial applications that deal with analytical data. This has been developed by the open-source community for more than two decades.

MySQL: This is the most popular database (next to Oracle) and used by the most sought-after applications like Netflix, Uber, etc. As it is open-source, it has numerous features developed by the community for more than two decades.

Note: Aurora was not considered as we did not have globally distributed applications or enterprise applications in scope.

Purchasing Model – AWS RDS

- Single AZ - For less critical applications, where the availability requirement is 99.5%

- Multi AZ - Multi AZ deployment was chosen for critical and HA applications, the number of users is high across the region, and apps that require a DR instance can quickly switch in case of AZ failure/outage.

Backup and Disaster Recovery

- Database automatic backups are taken by the database support team as per the business agreement.

- RDS database instances are configured to take point-in-time snapshots every 5 minutes.

- For application servers, EC2 instance snapshots are taken as per RTO and RPO requirements.

- For Single AZ databases in case of AZ failure.

- The latest available snapshot will be restored in a new RDS instance.

- Applications are configured with RTO and RPO of 12-24 hours.

- For Multi AZ databases in case of AZ failure.

- Automated switching of AZ in place without any disruption of service using cloud-native services like lambda functions and cloud watch.

- Switch DNS to point to the DR instance using Route 53 (RTO < 5 hours) for the application component.

Figure 2. DR Strategy for App Servers (AZ-Specific)

Design Considerations for Migration

Following are the key design considerations when we evaluate options for migration:

- Number of database servers and databases

- The acceptable downtime during the migration process – This should help in deciding online vs offline migration methods

- The Database Version an organization would want to retain or open for the latest stable version

- Expected backup redundancy support (zone vs region) and recovery model for business continuity

- Data Residency - Classifying data appropriately and understanding the regulatory requirements and alignment

- Consider the user base location and decide on the nearest region/data centers available in AWS

- Analytics - The rate of growth of data and data capabilities that can be developed

- Overall cost

Database Migration Process

Figure 3. Migration Process

Migration Phases

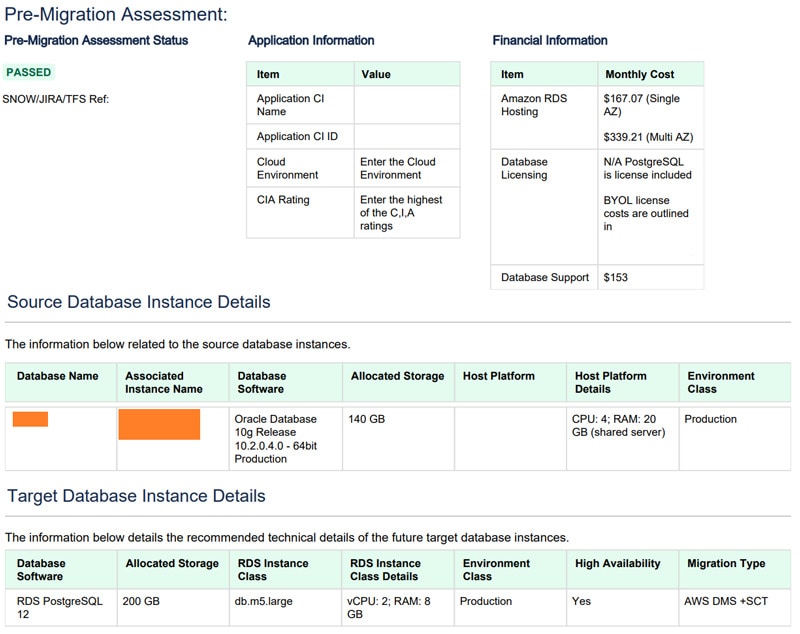

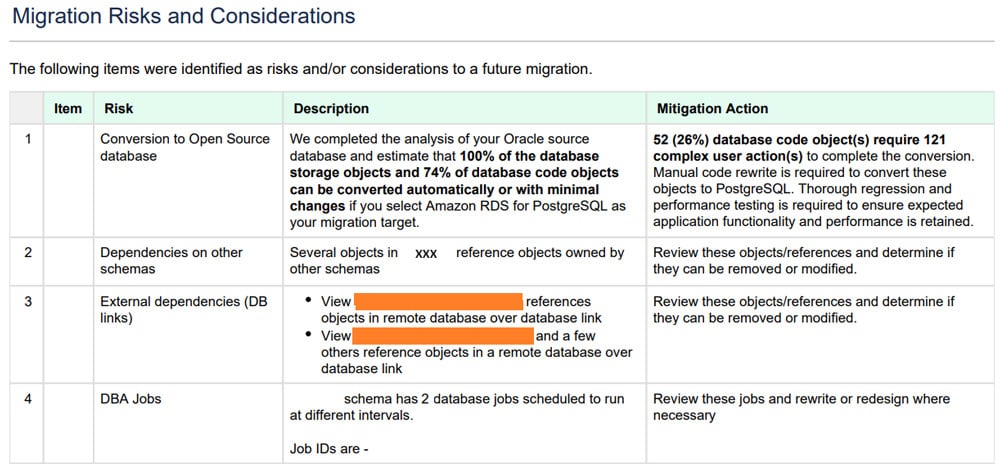

Assessment

- Pre-migration assessment (PMA) report will be created using the discovery tool (AWS SCT).

- PMA report contains the source and target database size, class, etc., details along with other design considerations (database-specific).

Figure 4. PMA Report

Data migration Service (DMS) tool can perform assessments and provide recommendations. It evaluates:

- Database compatibility (breaking changes, deprecated features)

- Unsupported Datatypes

- Migration of LOBS

- Source table with LOBS but without a primary key or unique constraint

- Source table without primary keys for CDC or full load type of migration

- Target tables without primary keys

- Unsupported primary key types

On the other hand, an assessment must also be done on the application code side to evaluate the number of changes to be implemented. We also need to consider the assessment of other dependencies from external or third-party interfaces.

Build - This phase is to build/install and migrate the code and data. AWS Database Migration Service (DMS) will be used for data migration, and the Schema Conversion Tool (SCT) will be used for converting SQL Server/Oracle database code to open-source database code.

Below are the steps involved:

- Identifying the mapping source databases and target databases

- Mapping source datatype and target datatype

- DB objects remediation

- Database changes comparison and documentation

- Database and code impact analysis and review

- Database code changes review

- Converting the schema using AWS SCT

- DB and code remediation

- Migrate data

- Objects and data validation using AWS DMS

The below diagram depicts the purpose of using AWS Schema Conversion Tool (SCT) and Database Migration Service (DMS):

Figure 5. AWS DMS and SCT Migration Process

Post Validation - This phase is to validate the migrated data and perform testing from the database and application.

- Custom scripts to check and compare DB objects. This should involve taking care of database objects like sequences, indexes, etc.

- Creation of external dependencies using open-source native functionalities

- Database changes unit testing

- Performance testing on the open-source database

- Data validation between prod and test

- System and integration testing

UAT Preparation - This will involve production followed by custom checks and balances, which are done during the post validation stage. The migration team will perform basic functional testing of the application to make sure all the pages are loading with the latest data.

Post UAT preparation, cutover to production will be planned and implemented.

AWS RDS Migration Tools and Services – Overview

AWS provides multiple tools for Commercial Database Migration. Each tool has its own capabilities and benefits, as mentioned below:

| Tool | Use of the tool |

|---|---|

| AWS Database Migration Service | It is an AWS-managed service that helps to move the workload to AWS or from AWS RDS Commercial offering to open-source offerings with minimal downtime quickly, securely, and with no data loss. |

| Schema Conversion Tool | It is a replication agent that extracts/copies the data from the source database. It works in conjunction with AWS DMS and can work in the background. This tool makes heterogeneous database migrations easier. |

Reference: AWS Database Migration Guides

Custom Tools and Accelerators

- The custom discovery tool was written in a power shell. On execution, a report will be generated covering below details:

- Server name with IP Address

- Server specs (CPU, RAM, etc.)

- Storage details

- Listening to TCP and UDP ports

- Software installed

- A custom cloud formation template was written, which would take EC2 snapshots every 15 minutes (for highly critical applications), and these were monitored using cloud watch. An alarm would be generated if any snapshot activity was missed, and a red alert mail would be sent to the ops team.

Migration Methods

AWS Database Migration Service (DMS) will support the below types of migration.

Full Load – Full load with AWS DMS migrated all the data from the source database to the target database, but it doesn’t replicate the changes in data.

Full Load + CDC (Change Data Capture) – Full load with AWS DMS migrated all the data from the source database to the target database at the start and replicated subsequent changes at the source, too. It will monitor the database while the task is in progress. This is especially good for very large heterogeneous databases and does not pause workloads.

CDC Only – The CDC will only replicate the changes that have happened in the database, not the initial full load of data. This option is suitable when you are using other methods to transfer your database but still sync with ongoing changes at source.

Migration Best Practices and Lessons Learnt

Best Practices

- As part of the best practices, first understand the source environment, the complexity of the source environment, and the various dependencies available in the source environment.

- For individual applications, please prepare the point of contact or SME application owner details who will provide the technical build document/technical details for the applications and DBs.

- Check parameters for your source environment like legacy features, compatibility issues and downtime, and size of the DB, backup job run time, and planned change history of the last few months, and maintain the checklists in a shared secure repository.

- Opt for reserved instances since the applications are going to be used for the longer term.

- Prepare the end-to-end documentation for your migration and capture the screenshots of the performed steps and issues encountered during the migration.

- Ensure required monitoring services are enabled.

- Configure events, tasks, and notifications

- Configure task log to analyze and fix migration issues

Lessons Learnt

- Ensure good network bandwidth is available between source and target for data movement. This plays the most crucial part, where ideally less downtime will be available for business-critical applications.

- Always check the permissions of the user accounts in the source databases. We came across many databases where sysadmin permissions were provided. However, this is not encouraged in RDS instances. The least privilege principle is always recommended. To remediate this, the team had to scan through the database objects and check if sysadmin privileges were really required or not.

- Always run a proof of concept before going to execute.

- Follow the iterative process of the migration wave and try to overcome the migration blockers.

Limitations of Open-Source

- Open-source databases are not as familiar as commercial databases, though they provide similar features.

- Adaptability – Since this is a different database engine, it will take some time for the ops/BAU teams to get used to it.

- The basic database support is not associated with any organization. We have to go through the community threads for any issues.

- Need to have detailed discussions with the stakeholders and convince them of the features and cost savings without impacting the application.

Apart from the above-recommended practices, below are the additional parameters the need to be considered.

- Understand the migration cost

- Creating the tasks:

- Selecting Migration types: Full load, CDC, Full load + CDC

- Target Table preparation mode

- Controlling migration of large objects (LOBS)

- Capturing and Monitoring AWS DMS tasks using:

- Host Metrics

- Replication Task Metrics

- Table Metrics

- Performance Expectations

- Load multiple tables in parallel

- Use multiple tasks

- Improving LOB performance

- Optimizing change processing

- Use task log to troubleshoot migration issues

Customer Success Story

An energy client based in the US was undergoing modernization and cloud transformation, with multiple critical applications being migrated from on-premises to AWS. The client decided to modernize their commercial databases to open-source databases to eliminate commercial database components and reduce license costs.

In the first phase of modernization, about 25 databases were considered as candidates for database transformation to open-source. Keeping the same compute in the target and adding 20% extra storage resulted in approximately 1 million USD in savings per year.

Challenges:

- Convincing multiple stakeholders of the database transformation to open-source.

- The different open-source database engines made complete automatic conversion of objects impossible, requiring manual remediation.

- Impact analysis on the front end of the application and external systems.

- Identification of database-specific native features in commercial databases.

Solution:

- Multiple meetings were arranged with stakeholders along with application ops/BAU teams.

- Design the target architecture of the application and get it reviewed by the client architecture team.

- DMS tool was leveraged to scan, generate a report, migrate data, and determine suitable remediations.

- SCT tool was used to convert the database schema.

Benefits:

- The database is fully managed, eliminating the need to worry about underlying infrastructure.

- No license costs were incurred, resulting in a lower total cost of ownership.

- Automatic and rapid scaling was made possible whenever traffic flowed in.

- Affordability was increased due to lower operating costs that were only charged during usage periods, allowing the database to be paused when not in use and resumed when needed.

References

- AWS Database Migration Guides

- AWS RDS PostgreSQL

- AWS RDS MySQL

- AWS RDS Migration Open Source

- Database Migration Step-by-Step Walkthroughs

- Database Migrations

- Limitations of MySQL

Subscribe

To keep yourself updated on the latest technology and industry trends subscribe to the Infosys Knowledge Institute's publications

Count me in!