Integration Fabric - The Future of APIs with Model Context Protocol

This whitepaper explores how Model Context Protocol (MCP) transforms traditional APIs into intelligent, context-aware ecosystems. MCP complements—not replaces—APIs, enabling adaptive reasoning, tool integration, and agentic workflows. Part of the Integration Fabric series, it offers insights on MCP architecture and enterprise adoption strategies for an AI-first world.

Insights

- As part of enterprise digital and AI transformation, organizations are moving beyond traditional APIs to build an AI Integration Fabric that enables context‑aware, intelligent, and autonomous interactions powered by Large Language Models and agentic systems.

- The paper explores emerging agent communication protocols including Model Context Protocol (MCP), Agent Communication Protocol (ACP), Agent‑to‑Agent (A2A), and Agent Payment Protocol (AP2), which together form a new semantic, agent‑ready integration layer.

- It provides a deep dive into MCP, detailing its core components, server and client concepts, and how it complements existing APIs to enable scalable, secure, and modular agentic workflows.

- Through real‑world enterprise use cases and industry tools, the white paper offers practical guidance on how organizations can modernize integrations, scale adoption, and future‑proof their technology landscape for collaborative AI agents.

- API modernization becomes essential for AI readiness—APIs must evolve with machine‑consumable semantics, introspection capabilities, deterministic behavior, and security primitives so that LLM‑driven agents can interact reliably, safely, and autonomously at scale.

Introduction

The rapid evolution of artificial intelligence—particularly with the emergence of Large Language Models (LLMs) like GPT, Claude, and Gemini—has triggered a foundational shift in how software components interact, collaborate, and execute tasks. Traditional APIs, designed for predictable, request-response interactions, are effective for transactional tasks but lack the flexibility required for intelligent, adaptive, and collaborative functions now enabled by LLMs and autonomous agents.

In an AI-first world, the expectation from digital systems is no longer just data retrieval or CRUD operations. Instead, we are moving towards intelligent systems capable of understanding context, dynamically discovering tools, coordinating with other agents, and making autonomous decisions on behalf of users.

This whitepaper explores the evolution of API communication from its early days of machine-to-machine data transfer to the emerging paradigm of agent-to-agent collaboration powered by Model Context Protocol (MCP), Agent Communication Protocol (ACP), and A2A (Agent-to-Agent). These protocols are designed to encapsulate not just data, but intent, context, memory, and adaptive behavior, thereby enabling a much more powerful interaction model between machines.

This whitepaper is intended for technology strategists, enterprise architects, platform engineers, and innovation leaders looking to understand how to future-proof their systems for agentic computing. It provides both a conceptual foundation and a practical guide for adopting next-generation AI protocols and building intelligent, context-aware, and collaborative systems of the future.

Traditional APIs and Their Limitations

Historically, APIs (such as REST, SOAP, or GraphQL) were sufficient for:

- Structured, synchronous data exchange

- Stateless interactions

- Defined, versioned schemas

- CRUD operations over HTTP

However, as LLMs began to orchestrate tools, interpret user goals, and manage workflows, the following limitations became apparent:

- Lack of memory: No continuity between API calls or awareness of prior context

- No reasoning layer: Cannot support planning, negotiation, or decision-making

- Static binding: Rigid integrations with tools/services

- Minimal expressiveness: Limited to predefined parameters and routes

This meant that even though LLMs could “think,” they were essentially boxed in by outdated communication constructs.

At Infosys, we are helping customers create an AI Integration Fabric.

Modernize the Existing Legacy APIs By Using the Following Levers:

- Machine-Consumable Metadata

APIs must expose structured, self-describing metadata that removes ambiguity and enables seamless machine interpretation, eliminating the need for manual guesswork.

- Intelligent Error Semantics

Errors should be rich in context and structured in a way that allows AI agents to understand failure modes and initiate corrective actions autonomously.

- Full Introspection Capabilities

APIs should support deep introspection, allowing autonomous systems to discover available operations, data models, and interaction patterns without human intervention.

- Predictive Naming Conventions

Consistent and logical naming patterns empower AI agents to infer functionality and relationships, enhancing discoverability and reducing training overhead.

- Deterministic Behavior

APIs must behave predictably under defined conditions, fostering trust and reliability in automated workflows and decision-making systems.

- Agent-Oriented Documentation

Documentation should be structured not just for developers but also for AI agents, providing semantic clarity, usage patterns, and behavioral expectations.

- Performance-Grade Responsiveness

Speed and reliability are foundational for AI-driven systems that depend on real-time data exchange and uninterrupted execution.

- Built-In Discoverability

APIs should be easily discoverable through registries, metadata, and semantic descriptors, unlocking new integration pathways for autonomous agents and systems.

- Workload & Performance Scaling

Multi-agent systems can dramatically increase API traffic due to parallel tool calls, chain-of-thought workflows, and autonomous retries. Legacy APIs must be modernized with:

- Horizontal scalability (autoscaling, load-balancing, multi-node distribution)

- Higher concurrency limits to support agent fan out patterns

- Rate limiting + backoff strategies to prevent overload

- Caching layers (response caching, semantic caching) to reduce repeated calls

- Async/non-blocking execution models to handle bursty agent workloads

These measures ensure APIs can sustain the increased load generated by autonomous agentic interactions without degradation or failure.
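To make the rate-limiting and backoff lever concrete, the following is a minimal sketch of how an agent-side caller might retry a failing API call with capped exponential backoff and jitter. The function name `call_with_backoff`, its parameters, and the choice of `ConnectionError` as the retryable failure are assumptions made for illustration, not part of any specific framework.

```python
import random
import time

def call_with_backoff(operation, max_attempts=5, base_delay=0.1, max_delay=5.0,
                      sleep=time.sleep, rng=random.random):
    """Retry `operation` with capped exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            # The delay ceiling doubles on each attempt, capped at max_delay.
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            # Full jitter spreads out retries from many concurrent agents.
            sleep(rng() * ceiling)
```

The `sleep` and `rng` parameters are injectable so the retry schedule can be tested without waiting; in production the defaults would normally be left in place.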

- Security and Trust Levers

Modernization efforts must include strong security primitives—such as secure authentication propagation, signature verification, and auditable access policies—to ensure that machine-driven interactions remain trustworthy, compliant, and enterprise‑grade.
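As one illustration of signature verification, the sketch below signs a request body with HMAC-SHA256 and verifies it in constant time using only the Python standard library. The shared-secret model and the function names `sign` and `verify` are assumptions; enterprises may instead rely on asymmetric signatures or token-based schemes.

```python
import hashlib
import hmac

def sign(secret: bytes, body: bytes) -> str:
    """Return a hex HMAC-SHA256 signature for the request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()

def verify(secret: bytes, body: bytes, signature: str) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign(secret, body)
    return hmac.compare_digest(expected, signature)
```

A receiving API would reject any request for which `verify` returns `False` and record the attempt in its audit log.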

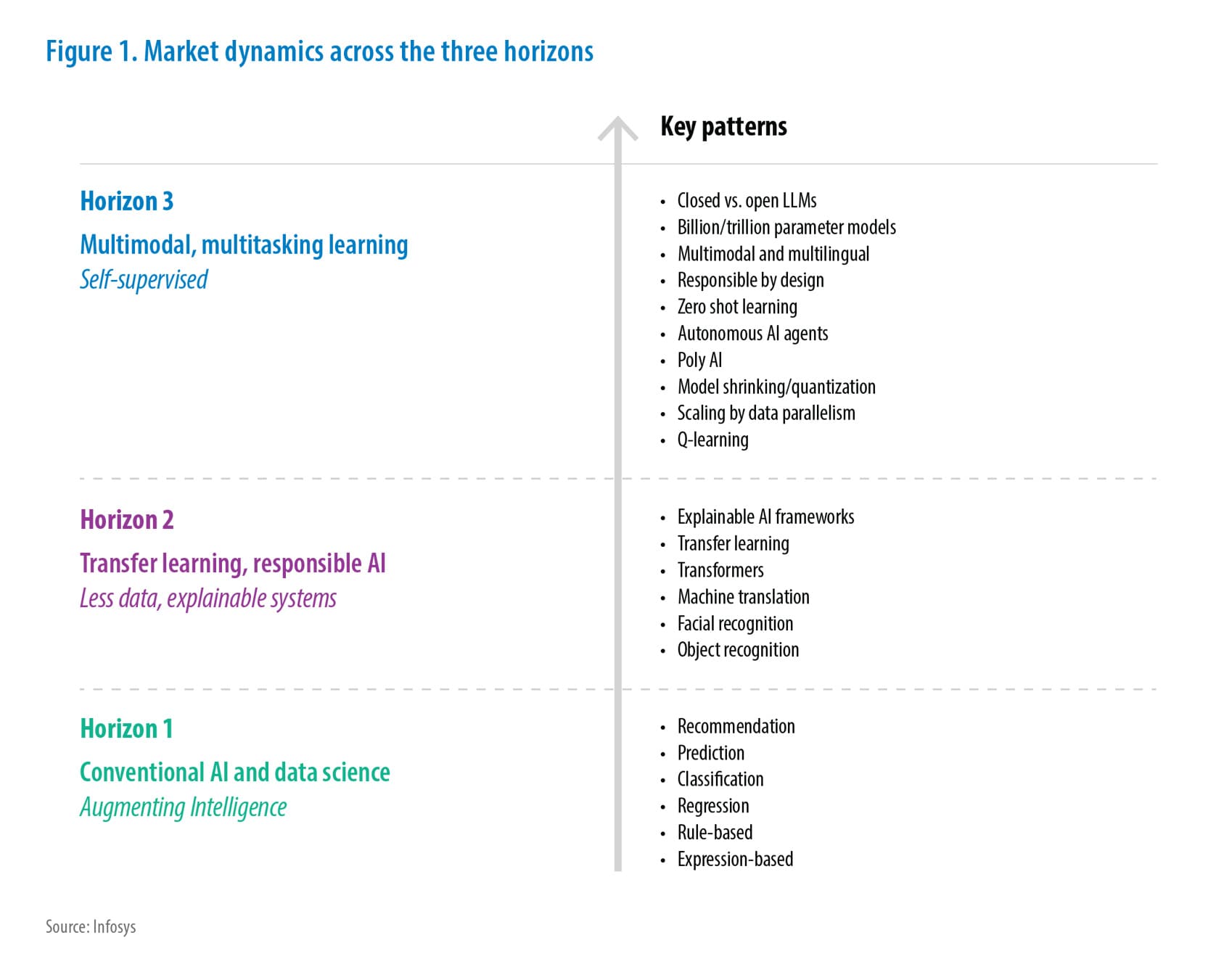

Evolution of AI/LLM-Related Communication Protocols

As artificial intelligence systems, particularly Large Language Models (LLMs), have advanced from static predictors to dynamic agents capable of understanding and interacting with complex tasks, the need for new communication protocols has become paramount. Traditional API-based communication was built for deterministic interactions: a client requests a resource, and the server responds with data. But LLM-driven systems are inherently contextual, stateful, goal-oriented, and often collaborative, requiring a new paradigm of interaction far beyond static endpoints and payloads.

Emergence of New Protocols:

Rise of Agentic Communication

To empower LLMs as autonomous agents — capable of executing multi-step reasoning, invoking tools, and collaborating with peers—new forms of communication had to emerge that support:

- Dynamic tool discovery

- Context-aware intent propagation

- Memory persistence and traceability

- Role-based agent interactions

- Recursive planning and goal decomposition

This shift gave birth to a new category of protocols, often referred to as Agentic Communication Protocols, which differ fundamentally from RESTful interfaces.

Key Design Shifts in Modern Protocols:

| Dimension | Traditional APIs | Agentic Protocols (LLM/AI) |

|---|---|---|

| Interaction Model | Request–Response | Intent-Based, Multi-Turn, Contextual |

| Session Management | Stateless | Stateful, with embedded memory |

| Endpoint Discovery | Static, pre-integrated | Dynamic, On-the-Fly Discovery |

| Payload Semantics | Structured Data | Intent + Context + Instruction |

| Collaboration | One-to-One | Many-to-Many (Agent-to-Agent, Tool-Orchestrated) |

| Execution Style | Deterministic | Reasoned, Adaptive, Goal-Oriented |

Early Protocols and Language Binding Attempts

Before agent-specific protocols matured, some early efforts tried to bend existing tools:

- LangChain / Semantic Kernel: Tried to manage tool usage and planning using code libraries

- Function Calling APIs (OpenAI, Anthropic): Introduced structured function calling in JSON, enabling limited tool invocation

- GraphQL + AI layer: Enabled flexible queries, but lacked reasoning capabilities

These early frameworks were valuable stepping stones, but they did not introduce protocol-level abstractions. They could orchestrate tools inside a single application but could not support standardized, interoperable communication between multiple autonomous agents. As a result, they were unable to scale to true multi-agent ecosystems, which require shared schemas, message semantics, and service-level contracts rather than library-specific bindings.

Emergence of Purpose-Built Agent Protocols

The following protocols are emerging as candidate industry standards:

A. MCP – Model Context Protocol

- Standardizes how context (task history, goals, memory) is embedded, transferred, and retrieved by LLMs.

- Enables model-driven decision making using environmental and historical cues.

B. ACP – Agent Communication Protocol

ACP defines the formal message semantics for structured agent interactions.

It focuses on how agents express intent, describe actions, reference tools, exchange structured messages, and maintain conversational state.

Core characteristics:

- Provides schema, syntax, and semantics for agent‑to‑agent messaging

- Establishes roles, delegation patterns, and workflow orchestration

ACP is essentially the rules and structure of communication — not the topology or the collaboration model.

C. A2A – Agent‑to‑Agent Protocol

A2A defines the decentralized collaboration model between autonomous agents.

It emphasizes peer-level behavior rather than message structure.

Core characteristics:

- Enables peer‑to‑peer negotiation, coordination, and capability exchange

- Allows agents to advertise skills, form temporary coalitions, and work together dynamically

- Works without centralized orchestration or hierarchy

While ACP defines how messages are structured, A2A defines how agents relate to each other in a networked environment. In simple terms, ACP governs the language agents speak, while A2A governs the way agents interact and collaborate using that language.

D. AP2 – Agent Payments Protocol

- Open standard for secure agent-led payments across platforms.

- Extends A2A and MCP to enable payment-agnostic transactions between users, merchants, and providers.

E. AG-UI – Agent-User Interaction Protocol

As agents become stateful and tool-driven, the UI must stay tightly synchronized with agent actions in real time. Traditional UI update mechanisms cannot reliably reflect rapid, multi-step agent actions or tool calls, which leads to inconsistency, race conditions, or loss of state, so a dedicated protocol is needed.

- Lightweight protocol streaming JSON events over HTTP or binary channels.

- Synchronizes messages, tool calls, and state between agent backend and UI in real time.

Together, these form a layered communication stack, much like the OSI model, but optimized for semantic, goal-driven exchanges.

Inspirations from Multi-Agent Systems (MAS)

Interestingly, these ideas are not entirely new. Multi-Agent Systems (MAS) from academic AI research proposed frameworks like:

- FIPA ACL (Agent Communication Language): For sending "inform", "request", "propose" messages.

- Belief-Desire-Intention (BDI) Models: For modelling agent behavior.

However, with modern LLMs, we now have the reasoning substrate to make these frameworks practical and useful at scale.

While MAS concepts inspire parts of today’s agentic ecosystem, modern protocols such as MCP, ACP, and A2A do not directly inherit or implement FIPA‑ACL. They borrow high‑level ideas (e.g., intent expression, role‑based communication), but their semantics, message structures, safety boundaries, and runtime designs are fundamentally new and tailored for LLM‑driven systems.

Convergence of Protocols with LLM Ecosystems

Agent communication protocols are now being actively integrated into open-source and enterprise ecosystems:

- LangGraph: Introduces graph-based agent state flows with memory and tool calls.

- CrewAI, AutoGen, Phidata: Build LLM agents with defined roles using MCP/ACP abstractions.

- OpenAgents, AutoGPT, Voyager: Show early experiments in autonomous task planning using these concepts.

These implementations demonstrate that protocols are no longer optional — they are foundational to building reliable, reusable, intelligent agent systems.

Future Outlook

As LLMs evolve to become long-running, memory-augmented, tool-rich agents:

- Communication will resemble semantic collaboration rather than function calls

- Inter-agent communication will become self-describing, secure, and possibly trust-aware

- Industry standards will emerge, like REST/OpenAPI for traditional APIs

- Developer tooling (agent debuggers, simulation engines, protocol validators) will flourish

While MCP is rapidly stabilizing, related protocols such as ACP and AP2 are still in active evolution and not yet fully standardized. Their specifications, governance structures, and interoperability patterns are expected to mature over the next several cycles. Enterprises should view them as emerging standards, not finalized ones.

We are entering a world where agents are not just endpoints, but collaborators — and this demands a new, intelligent language for communication.

MCP – Model Context Protocol

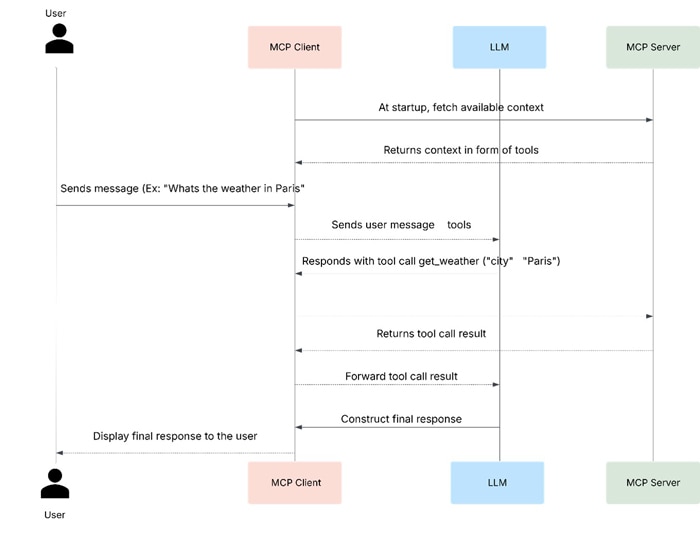

Model Context Protocol (MCP) is an open standard that enables applications to provide structured context to Large Language Models (LLMs). Like USB‑C for devices, MCP standardizes how AI models connect to data sources and tools, allowing seamless integration and portability of context across applications.

A key architectural distinction is that APIs contain and execute business logic, whereas MCP exposes a model‑friendly semantic layer on top of those APIs. MCP does not replace or reinterpret the underlying logic; rather, it wraps existing capabilities in a consistent structure that LLMs can understand, invoke, and reason over.

Key Benefits

- Pre-built and custom integrations for AI applications

- Open, free‑to‑use protocol

- Maintains contextual continuity for reasoning and workflows

Unlike traditional APIs, MCP handles dynamic information — conversation history, environment state, intent metadata — allowing LLMs to adapt and act intelligently in real-world scenarios. The logic still runs through traditional APIs or services; MCP simply provides an LLM‑native interface that semantically exposes those capabilities.

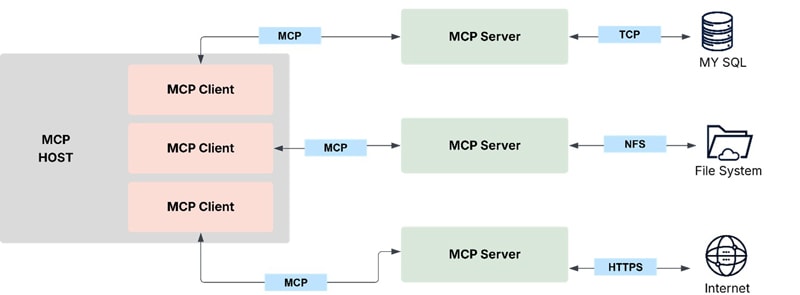

A. Core Components:

- LLM: Interprets user input and context

- MCP Host: Runtime environment (e.g., chatbot) connecting LLM to MCP clients

- MCP Client: Maintains 1:1 connection with MCP servers and relays context

- MCP Server: Provides context in a standardized format (local or remote)

- Context Types: Tools, resources, prompts, and sampling

Example: Visual Studio Code acts as an MCP host, connecting to multiple MCP servers (e.g., Sentry, filesystem) via separate MCP clients.

Note that an MCP server is the program that serves context data, regardless of where it runs. MCP servers can execute locally or remotely. For example, when Claude Desktop launches the filesystem server, the server runs locally on the same machine because it uses the STDIO transport. This is commonly referred to as a “local” MCP server. The official Sentry MCP server runs on the Sentry platform and uses the Streamable HTTP transport. This is commonly referred to as a “remote” MCP server.

The STDIO transport is intended primarily for local development and desktop integrations, where the MCP server runs on the same machine. For distributed or enterprise‑grade deployments, Streamable HTTP is the recommended transport, enabling remote execution, scalability, service reliability, and secure cloud integration.
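To give a feel for what travels over the STDIO transport, the sketch below frames JSON-RPC messages as one JSON document per line, which is how the MCP specification describes stdio framing. The helper names `write_message` and `read_messages` are hypothetical, and an in-memory stream stands in for the server process's stdin and stdout.

```python
import io
import json

def write_message(stream, message: dict) -> None:
    """Write one JSON-RPC message per line."""
    # json.dumps escapes embedded newlines, so each message stays on one line.
    stream.write(json.dumps(message) + "\n")

def read_messages(stream):
    """Yield each non-empty line of the stream as a parsed message."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)

# Usage: an in-memory buffer stands in for a server's stdin.
buffer = io.StringIO()
write_message(buffer, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
buffer.seek(0)
received = list(read_messages(buffer))
```

Streamable HTTP carries the same JSON-RPC payloads over HTTP requests instead, which is what makes remote deployment possible.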

B. Server Concepts:

MCP servers expose domain-specific capabilities to AI applications via standardized interfaces. Examples include:

- File System Server: Document management

- Email Server: Message handling

- Travel Server: Trip planning

- Database Server: Data queries

Servers operate through three core building blocks: Tools, Resources, and Prompts.

Transport Consideration:

Most production deployments use Streamable HTTP for MCP servers to support remote access and distributed scaling. STDIO should be used only for local or single‑machine scenarios.

| Building Block | Purpose | Who Controls It | Real‑World Example |

|---|---|---|---|

| Tools | For AI actions | Model‑controlled | Search flights, send messages, create calendar events |

| Resources | For context data | Application‑controlled | Documents, calendars, emails, weather data |

| Prompts | For interaction templates | User‑controlled | “Plan a vacation”, “Summarize my meetings”, “Draft an email” |

Each of the three building blocks is described below:

Tools – AI Actions

- Purpose: Allow LLMs to perform actions via server-implemented functions.

- Definition: Schema-based interfaces validated with JSON Schema.

- Operations:

  - tools/list → Discover available tools

  - tools/call → Execute a specific tool

- Example:

  - searchFlights(origin, destination, date)

  - createCalendarEvent(title, startDate, endDate)

  - sendEmail(to, subject, body)

- Control:

  - Hosts should obtain explicit user approval before executing a tool, particularly for state-changing operations.

  - Tools should not execute without client mediation. The MCP specification recommends keeping a human in the loop with the ability to deny tool invocations; enforcing this boundary is the responsibility of the host and client, because the protocol itself cannot prevent a host from auto-approving calls.

  - For state-changing operations (e.g., updates, write actions, calendar modifications), MCP servers should implement idempotent behavior or provide idempotency keys to prevent duplicate or unintended changes when an LLM retries or re-plans an action.

- User Interaction:

  - Tools are model-discoverable, but human oversight is strongly recommended.

  - Applications should show available tools, provide approval dialogs, and maintain activity logs.

  - Client mediation helps ensure that unsafe, ambiguous, or injected prompts cannot directly trigger tool operations.
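The tool operations and the approval step described above can be sketched as a small JSON-RPC handler. This is an illustrative, in-memory example rather than an implementation built on an MCP SDK: the `TOOLS` registry, the `handle_request` function, and the `approve` callback are hypothetical names, and the sketch collapses server dispatch and client-side approval into one function for brevity.

```python
from typing import Callable

# Hypothetical tool registry: name -> (definition, handler).
TOOLS = {
    "searchFlights": (
        {
            "name": "searchFlights",
            "description": "Search for available flights",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "origin": {"type": "string"},
                    "destination": {"type": "string"},
                    "date": {"type": "string"},
                },
                "required": ["origin", "destination", "date"],
            },
        },
        lambda a: f"3 flights from {a['origin']} to {a['destination']} on {a['date']}",
    )
}

def handle_request(request: dict, approve: Callable[[str, dict], bool]) -> dict:
    """Answer tools/list and tools/call JSON-RPC requests."""
    method, req_id = request["method"], request["id"]
    if method == "tools/list":
        result = {"tools": [definition for definition, _ in TOOLS.values()]}
    elif method == "tools/call":
        name = request["params"]["name"]
        args = request["params"].get("arguments", {})
        definition, handler = TOOLS[name]
        missing = [k for k in definition["inputSchema"]["required"] if k not in args]
        if missing:
            text, is_error = f"missing arguments: {missing}", True
        elif not approve(name, args):  # approval gate, normally shown by the host UI
            text, is_error = "call declined by user", True
        else:
            text, is_error = handler(args), False
        result = {"content": [{"type": "text", "text": text}], "isError": is_error}
    else:
        return {"jsonrpc": "2.0", "id": req_id,
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": req_id, "result": result}
```

Passing `approve` as a callback keeps the approval policy in the host, so the same handler can be paired with an interactive dialog or a stricter enterprise policy engine.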

Resources - Context Data

Resources provide structured access to external information that the host application can retrieve and supply to AI models as context.

Overview

- Expose data from files, APIs, databases, or other sources for contextual understanding.

- Identified via URIs (e.g., file:///path/to/document.md) and declare MIME types for content handling.

- Support two discovery patterns:

  - Direct Resources: Fixed URIs for static data.

  - Resource Templates: Parameterized URIs for dynamic queries (e.g., travel://activities/{city}/{category} → travel://activities/barcelona/museums).

- Resource templates must be designed carefully to avoid leaking sensitive filesystem paths, directory structures, or internal network identifiers, which is particularly important in enterprise environments.

- To maintain contextual consistency and support safe retries, MCP servers should expose version identifiers (e.g., timestamps, etags, content hashes) so LLMs and hosts can reliably detect changes, avoid stale reads, and handle conflict resolution.
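The resource template pattern can be illustrated with a short sketch that expands a template into a concrete URI and, in the other direction, extracts parameters from an incoming URI. It handles only simple `{name}` placeholders, a small subset of full URI template syntax, and the helper names `expand` and `match` are hypothetical.

```python
import re

def expand(template: str, **params: str) -> str:
    """Fill every {name} placeholder; raises KeyError if one is missing."""
    return re.sub(r"\{(\w+)\}", lambda m: params[m.group(1)], template)

def match(template: str, uri: str):
    """Return the extracted parameters, or None if the URI does not fit."""
    parts = re.split(r"\{(\w+)\}", template)
    # re.split with one capture group alternates literal text and names.
    pattern = "".join(
        re.escape(part) if index % 2 == 0 else f"(?P<{part}>[^/]+)"
        for index, part in enumerate(parts)
    )
    found = re.fullmatch(pattern, uri)
    return found.groupdict() if found else None
```

Restricting each parameter to a single path segment (`[^/]+`) is one simple way to keep a template from matching unexpected deeper paths, which relates to the leakage concern noted above.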

Protocol Operations

- resources/list → List available resources

- resources/templates/list → Discover resource templates

- resources/read → Retrieve resource contents

- resources/subscribe → Monitor resource changes

Example

- Calendar Data: calendar://events/2024

- Travel Documents: file:///Documents/Travel/passport.pdf

- Previous Itineraries: trips://history/barcelona-2023

Resource templates enable flexible queries, e.g.:

```json
{
  "uriTemplate": "weather://forecast/{city}/{date}",
  "name": "weather-forecast",
  "title": "Weather Forecast",
  "description": "Get weather forecast for any city and date",
  "mimeType": "application/json"
}
```

User Interaction Model

- Application-driven: Hosts decide how to retrieve and present resources.

- Common patterns:

  - Tree/list views for browsing

  - Search and filter interfaces

  - Smart suggestions based on conversation context

  - Manual or bulk selection for context inclusion

Prompts – Interaction Templates

Prompts provide reusable, structured templates for common tasks, enabling consistent workflows and reducing reliance on unstructured natural language input.

Overview

- Define expected inputs and interaction patterns for specific tasks.

- User-controlled: Prompts require explicit invocation, not automatic triggering.

- Can reference resources and tools for context-aware workflows.

- Support parameter completion for easier argument discovery.

Protocol Operations

- prompts/list → Discover available prompts

- prompts/get → Retrieve full prompt definition with arguments

Example

Plan a Vacation Prompt:

```json
{
  "name": "plan-vacation",
  "title": "Plan a vacation",
  "description": "Guide through vacation planning process",
  "arguments": [
    { "name": "destination", "type": "string", "required": true },
    { "name": "duration", "type": "number", "description": "days" },
    { "name": "budget", "type": "number" },
    { "name": "interests", "type": "array", "items": { "type": "string" } }
  ]
}
```

Workflow:

- Select “Plan a vacation” template

- Provide structured input: Barcelona, 7 days, $3000, [“beaches”, “architecture”, “food”]

- Execute consistent workflow based on template
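The workflow above can be sketched as a server-side handler that validates the supplied arguments against the prompt definition and returns a ready-to-use message. The `get_prompt` function, the trimmed `PLAN_VACATION` definition, and the wording of the rendered message are assumptions made for illustration.

```python
# Hypothetical prompt definition mirroring the "plan-vacation" example.
PLAN_VACATION = {
    "name": "plan-vacation",
    "arguments": [
        {"name": "destination", "required": True},
        {"name": "duration"},
        {"name": "budget"},
        {"name": "interests"},
    ],
}

def get_prompt(definition: dict, arguments: dict) -> dict:
    """Validate arguments against the definition, then build one user message."""
    known = {arg["name"] for arg in definition["arguments"]}
    required = {arg["name"] for arg in definition["arguments"] if arg.get("required")}
    supplied = set(arguments)
    missing, unknown = required - supplied, supplied - known
    if missing or unknown:
        raise ValueError(f"missing={sorted(missing)} unknown={sorted(unknown)}")
    details = ", ".join(f"{key}: {value}" for key, value in arguments.items())
    # The wording of the rendered message is an assumption for illustration.
    text = f"Run prompt '{definition['name']}' with {details}"
    return {"messages": [{"role": "user", "content": {"type": "text", "text": text}}]}
```

Rejecting unknown or missing arguments before the LLM sees the prompt is what keeps the resulting workflow consistent from one invocation to the next.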

Error Handling Consistency: MCP servers should return structured, JSON‑schema‑aligned error formats for both tool calls and resource operations. Consistent error semantics allow LLMs to interpret failure modes, retry intelligently, request clarification from users, or adjust plans without producing hallucinated assumptions.

For enterprise deployments, adopting a predictable error schema (e.g., standardized error codes, messages, and remediation hints) is essential for reliability, auditability, and safe automation.
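A predictable error schema might look like the following sketch. The field names (`code`, `retryable`, `remediation`) and the `next_step` policy are assumptions chosen to illustrate the idea; the only convention borrowed from MCP is flagging a failed tool result with `isError`.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class ToolError:
    code: str          # stable, machine-readable identifier
    message: str       # human-readable summary
    retryable: bool    # whether repeating the same call can succeed
    remediation: str   # hint the agent can act on

def to_tool_result(error: ToolError) -> dict:
    """Wrap the error as a tool result flagged with isError."""
    return {
        "isError": True,
        "content": [{"type": "text", "text": json.dumps(asdict(error))}],
    }

def next_step(result: dict) -> str:
    """Decide what the agent loop should do with a tool result."""
    if not result.get("isError"):
        return "continue"
    detail = json.loads(result["content"][0]["text"])
    return "retry" if detail["retryable"] else "ask_user"
```

Because the retry decision is read from a structured field rather than inferred from free text, the agent does not have to guess why a call failed.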

User Interaction Model

- Prompts exposed via:

  - Slash commands (e.g., /plan-vacation)

  - Command palettes for searchable access

  - UI buttons for frequent tasks

  - Context menus suggesting relevant prompts

- Key principles: Easy discovery, clear descriptions, validated input, transparent template display.

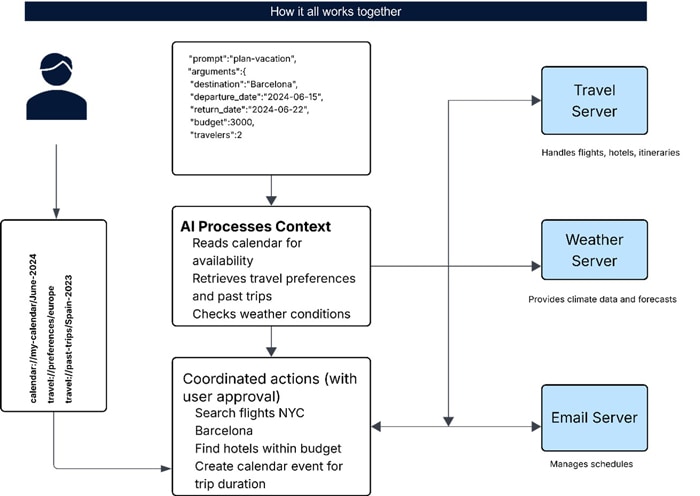

Multiple Servers Working Together

- User Input → JSON prompt with trip details

- Resource Selection → Calendar, travel preferences, past trips

- AI Context Processing → Reads calendar, retrieves preferences, checks weather

- Servers:

  - Travel Server → Flights, hotels, itineraries

  - Weather Server → Climate data and forecasts

  - Calendar/Email Server → Schedules and communications

- Coordinated Actions → Search flights, find hotels, create calendar events, send confirmations
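One way to picture the multi-server coordination above is a host that keeps a routing table from tool names to the server that provides them. The `Host` class and the tool names used in the usage lines are hypothetical, and plain Python callables stand in for real MCP client connections.

```python
class Host:
    """Keeps one connection per server and routes tool calls by tool name."""

    def __init__(self):
        self._routes = {}  # tool name -> (server name, handler)

    def connect(self, server_name, tools):
        """Register every tool a server exposes, rejecting name clashes."""
        for tool_name, handler in tools.items():
            if tool_name in self._routes:
                raise ValueError(f"duplicate tool: {tool_name}")
            self._routes[tool_name] = (server_name, handler)

    def available_tools(self):
        return sorted(self._routes)

    def call(self, tool_name, **arguments):
        server_name, handler = self._routes[tool_name]
        return {"server": server_name, "result": handler(**arguments)}

# Usage with stand-in servers.
host = Host()
host.connect("travel", {"search_flights": lambda destination: [f"flight to {destination}"]})
host.connect("weather", {"get_forecast": lambda city: f"sunny in {city}"})
forecast = host.call("get_forecast", city="Barcelona")
```

The LLM only sees the merged tool list; the host decides which server actually receives each call.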

C. Client Concepts:

Understanding MCP Clients

MCP clients are created by host applications (e.g., Claude.ai, IDEs) to communicate with MCP servers.

- Host vs Client: The host manages the user experience and coordinates multiple clients. Each client handles one direct connection to a server.

- MCP clients form a critical trust boundary, mediating all server requests to protect users from prompt injection, unsafe tool calls, and unauthorized actions.

Core Client Features

Clients enrich interactions by enabling servers to:

- Request additional user input (elicitations)

- Access advanced capabilities like sampling

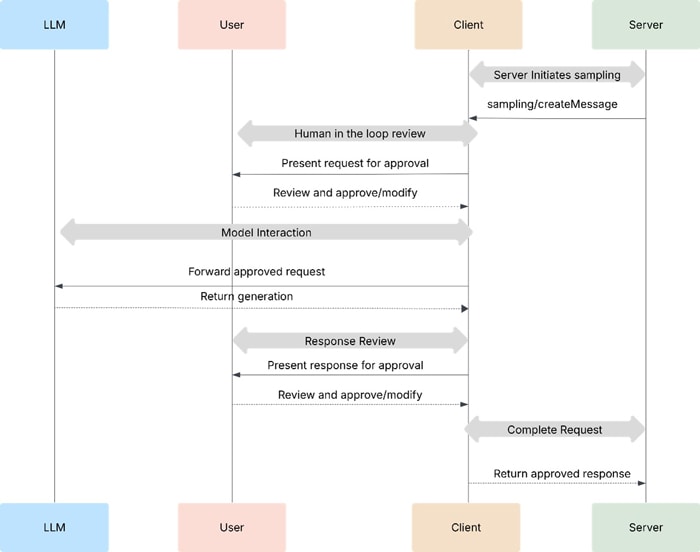

Sampling

Sampling lets servers request AI completions via the client, enabling agentic behaviors while maintaining security and user control.

Why it matters:

- Servers don’t need direct AI model integration or cost handling.

- Clients control permissions, transparency, and isolation of contexts.

- Clients block unsafe or unexpected model prompts, preventing malicious or manipulated tool calls.

Flow:

- Server requests AI help (e.g., analyze flight options).

- Client asks user for approval.

- AI processes request → user reviews response → result sent back to server.

Security:

- Human-in-the-loop approval

- Transparency of prompts and model choices

- Isolation from main conversation context

Example: Flight Analysis Tool

A travel server tool (findBestFlight) uses sampling to evaluate 47 flight options based on user preferences. The client mediates AI analysis and ensures user consent before returning recommendations.
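The sampling flow can be sketched as a client-side function with two approval checkpoints, one before the model is called and one before the result is returned to the server. The function name and the three callbacks are hypothetical, and the message shape is a simplified version of what a sampling request carries.

```python
def handle_sampling_request(params, approve_prompt, run_model, approve_response):
    """Client-side mediation of a server's sampling request."""
    prompt = params["messages"][-1]["content"]["text"]
    if not approve_prompt(prompt):        # user reviews the prompt first
        return {"error": "sampling request rejected by user"}
    completion = run_model(prompt)        # client chooses and calls the model
    if not approve_response(completion):  # user reviews the output too
        return {"error": "sampling response rejected by user"}
    return {"role": "assistant", "content": {"type": "text", "text": completion}}
```

Because the server only ever receives the final dictionary, it never needs model credentials, and the user can stop the exchange at either checkpoint.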

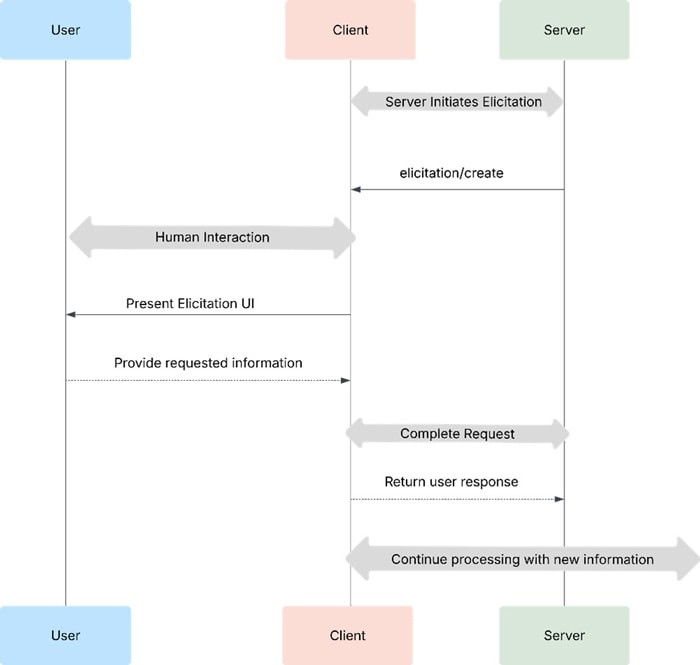

Elicitation

Elicitation enables servers to request specific information from users dynamically, making workflows more adaptive and interactive.

Overview

- Instead of requiring all data upfront, servers can pause and request missing details.

- This creates flexible, user-driven interactions rather than rigid processes.

Flow

- Server initiates elicitation (elicitation/create).

- Client presents UI with context and schema.

- User provides input or declines.

- Client validates and returns response to server.

- Server continues processing with new data.
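The flow above can be sketched as a client-side handler that shows the request to the user, validates the answer against the requested schema, and reports one of three outcomes. The `requestedSchema` field and the accept, decline, and cancel actions follow the published MCP specification as we understand it; the `handle_elicitation` function, the `ask_user` callback, and the small `TYPES` map are hypothetical.

```python
# Minimal mapping from JSON Schema type names to Python types.
TYPES = {"string": str, "boolean": bool, "number": (int, float)}

def handle_elicitation(params, ask_user):
    """Present an elicitation request and return accept, decline, or cancel."""
    schema = params["requestedSchema"]
    answer = ask_user(params["message"], schema)
    if answer in ("decline", "cancel"):
        return {"action": answer}
    props = schema["properties"]
    valid = (
        all(key in answer for key in schema.get("required", []))
        and all(key in props and isinstance(value, TYPES[props[key]["type"]])
                for key, value in answer.items())
    )
    if not valid:
        # This sketch cancels on invalid input; a real client might re-prompt.
        return {"action": "cancel"}
    return {"action": "accept", "content": answer}
```

Validating on the client keeps malformed or unexpected values from ever reaching the server.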

Example: Holiday Booking

Before finalizing a Barcelona trip, the server elicits:

- Booking confirmation

- Seat and room preferences

- Optional travel insurance

User Interaction

- Clear context: Why info is needed and how it’s used.

- Response options: Provide info, decline, or cancel.

- Privacy: No sensitive data like passwords; client validates and warns about suspicious requests.

- Safety: Client blocks malformed schemas or attempts at prompt injection that could lead to unsafe tool invocation.

D. Does MCP Replace APIs?

No. MCP does not replace APIs; it complements them. MCP serves as a context‑orchestration interface, not an execution or runtime layer. Its role is to standardize how LLMs access tools, resources, and prompts — but it does not execute business logic, host services, or replace the underlying API infrastructure.

To illustrate, let’s walk through a simple example of MCP in action. Suppose a user asks a chatbot a question requiring external context beyond the model’s training data, such as: “What’s the weather in San Francisco today?”

The MCP client first ensures it has up‑to‑date tool definitions from connected MCP servers. It injects the get_weather tool definition into the conversation context before sending everything to the LLM. The LLM recognizes it needs external data to answer the query and invokes:

get_weather(city="San Francisco")

The MCP client routes this tool call to the appropriate server. The server performs the actual logic — which is simply calling a traditional weather API — and returns the result. MCP’s involvement ends at orchestrating the tool invocation and the context surrounding it.

The returned data is passed back to the LLM, which uses it to generate the final response for the user.

Notably, the underlying computation here is still a standard API call. This highlights the key point: MCP does not replace APIs. It provides an LLM‑friendly interface and consistent context‑management layer on top of existing APIs, without altering how those APIs execute.
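The walkthrough can be condensed into a sketch in which the tool handler is a thin wrapper around an ordinary API call. Here `weather_api` is a stand-in that returns hypothetical data instead of making a network request, and `llm_decide` and `llm_summarize` are placeholder callbacks for the model's two turns.

```python
def weather_api(city: str) -> dict:
    """Stand-in for a traditional HTTP weather API (hypothetical data)."""
    return {"city": city, "temp_c": 18, "conditions": "fog"}

def get_weather(city: str) -> dict:
    """MCP-side tool handler: delegates to the API and adds no business logic."""
    data = weather_api(city)
    text = f"{data['city']}: {data['temp_c']} C, {data['conditions']}"
    return {"content": [{"type": "text", "text": text}], "isError": False}

TOOL_HANDLERS = {"get_weather": get_weather}

def answer(question: str, llm_decide, llm_summarize) -> str:
    """Route the model's tool call, then let the model phrase the reply."""
    tool_call = llm_decide(question)  # e.g. {"name": "get_weather", "arguments": {...}}
    result = TOOL_HANDLERS[tool_call["name"]](**tool_call["arguments"])
    return llm_summarize(question, result["content"][0]["text"])
```

Replacing `weather_api` with a real HTTP client would not change anything above it, which is the sense in which MCP sits on top of existing APIs rather than replacing them.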

E. MCP for agentic workflows:

A raw LLM maps inputs to outputs. An agentic LLM system adds:

- Tools to act

- Memory of past steps

- Iterative reasoning

- Goals or tasks

When an LLM can choose tools, reflect on outcomes, and plan next steps, it becomes agentic.

Role of MCP

MCP enhances this by:

- Providing context beyond tools, including parameterized prompts for chaining instructions.

- Surfacing related tools dynamically, avoiding bloated prompts and rigid workflows.

- Enabling modular, adaptive systems where LLM explores paths without deterministic constraints.

- MCP does not enforce or run the agent loop. It only provides structured context and tool interfaces.

- The host application (through its MCP clients) is responsible for orchestrating the reasoning loops, managing iterations, approving tool calls, and determining when the workflow ends. MCP is a contextual and semantic layer, not an agent runtime.

Agentic Architecture

An agent emerges from:

- LLM – reasoning and decision-making

- MCP servers – tools + dynamic prompts

- MCP client – orchestration and loop management

- User – goal provider

Example Workflow

Goal: “I want to focus on deep work today.”

- MCP client packages user goal + server context → sends to LLM.

- LLM selects plan_daily_tasks from Todo Server → returns prompt to break down tasks.

- LLM adds tasks, then invokes Calendar Server via schedule_todo_task.

- Calendar Server suggests schedule_event → LLM finalizes plan.

Throughout this process, the MCP client manages the reasoning loop—deciding when to re-prompt the LLM, when to approve or reject actions, and how state evolves—while MCP provides the structured tools and context that make the workflow possible.
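The reasoning loop described here can be sketched in a few lines. The `run_agent` function, the shape of the `step` dictionaries, and the `approve` callback are all assumptions for illustration; `llm_step` stands in for a real model call, and `tools` maps tool names to callables that would normally be reached through MCP.

```python
def run_agent(goal, llm_step, tools, approve, max_turns=8):
    """Host-side loop: ask the model, run approved tool calls, stop on a final answer."""
    history = [{"role": "user", "content": goal}]
    for _ in range(max_turns):
        step = llm_step(history)
        if step["type"] == "final":
            return step["content"], history
        name, args = step["name"], step["arguments"]
        if approve(name, args):
            outcome = tools[name](**args)
        else:
            outcome = "call rejected by user"
        # Tool outcomes are fed back so the model can plan its next step.
        history.append({"role": "tool", "name": name, "content": outcome})
    raise RuntimeError("turn limit reached without a final answer")
```

The turn limit and the approval callback are the two places where the host, rather than the model, decides how far the workflow may go.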

F. MCP Nesting:

MCP servers can also act as clients to other MCP servers, enabling modularity, composition, and delegation—like microservices for agents. This approach decouples tool logic from the agent runtime, creating a composable system of MCP servers that work like Lego blocks.

Example: Orchestrator Server

A dev-scaffolding server can coordinate upstream servers:

- spec-writer → API specs

- code-gen → scaffold code

- test-writer → generate tests

Enterprise‑Aligned Scenarios

1. Data Pipeline Automation

An enterprise may structure its data operations as a chain of MCP servers:

- Ingestion MCP Server → pulls raw data from APIs or warehouses

- Transformation MCP Server → applies business rules, validations, enrichment

- Analytics MCP Server → runs models, dashboards, ML inference

A central Pipeline Orchestrator MCP Server simply delegates to these downstream MCP servers. This mirrors enterprise ETL/ELT systems but with LLM-accessible semantics and composability.
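The delegation pattern can be sketched with a minimal stand-in for an MCP server that holds its own tools plus references to downstream servers. The `Server` class, the tool names, and the sample data are hypothetical; real servers would communicate over MCP transports rather than direct method calls.

```python
class Server:
    """A minimal MCP-like server: named tools plus optional downstream servers."""

    def __init__(self, name, tools=None, downstream=None):
        self.name = name
        self.tools = tools or {}
        self.downstream = downstream or {}

    def call(self, tool, payload):
        return self.tools[tool](self, payload)

def run_pipeline(server, source):
    # The orchestrator holds no data logic; it delegates in a fixed order.
    raw = server.downstream["ingestion"].call("ingest", source)
    clean = server.downstream["transformation"].call("transform", raw)
    return server.downstream["analytics"].call("analyze", clean)

ingestion = Server("ingestion", {"ingest": lambda s, src: [3, None, 5, 4]})
transformation = Server(
    "transformation",
    {"transform": lambda s, rows: [r for r in rows if r is not None]},
)
analytics = Server(
    "analytics",
    {"analyze": lambda s, rows: {"count": len(rows), "mean": sum(rows) / len(rows)}},
)
orchestrator = Server(
    "pipeline-orchestrator",
    {"run_pipeline": run_pipeline},
    downstream={"ingestion": ingestion, "transformation": transformation,
                "analytics": analytics},
)
```

From the agent's point of view only `run_pipeline` is visible; each stage can be replaced or scaled independently behind it.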

2. Chained API Orchestration for Business Workflows

Large enterprises often rely on multiple back‑office APIs (CRM, ERP, billing, HR). Instead of giving an LLM direct access to all systems, each is exposed as a dedicated MCP server:

- CRM Server → customer profiles, tickets, opportunities

- ERP Server → inventory, supply chain, procurement

- Billing Server → invoices, transactions, payment status

A Workflow MCP Server sits on top, orchestrating a multi-step business process such as “Create an order, check inventory, generate an invoice.” The Workflow server acts as both a client (to the downstream MCP servers) and a provider (to the agent), producing a secure, modular chain.

Remote MCP Servers

Most MCP servers today run locally via stdio, requiring manual installation alongside clients. While simple for testing, this limits scalability and interoperability:

- Fragmented ecosystem: Hard to share servers across teams or agents.

- Manual updates: No centralized version control.

- Security gaps: Difficult to enforce policies or audit behavior.

- Poor developer experience: No easy discovery or reuse of tools.

Shift to Remote

Anthropic’s spec updates introduce Streamable HTTP, enabling stateless remote servers and paving the way for a distributed MCP ecosystem.

This evolution supports scalable, enterprise-grade deployments where MCP servers behave like cloud microservices rather than local plugins.
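As a rough illustration of what a remote call looks like on the wire, the sketch below builds (but does not send) a JSON-RPC 2.0 `tools/call` request as it would be POSTed to a Streamable HTTP endpoint. The endpoint URL and the `shipment_id` argument are assumptions made for the example; the helper names are hypothetical and not part of any SDK.

```python
import json
from itertools import count

_request_ids = count(1)  # simple source of unique JSON-RPC ids


def build_tool_call(name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 'tools/call' request body."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def build_http_request(endpoint: str, body: dict) -> dict:
    """Describe the HTTP POST a client would send; nothing is transmitted here."""
    return {
        "method": "POST",
        "url": endpoint,
        "headers": {
            "Content-Type": "application/json",
            # The server may answer with plain JSON or with an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
        "data": json.dumps(body),
    }


request = build_http_request(
    "https://mcp.example.com/mcp",  # hypothetical endpoint
    build_tool_call("get_shipment_status", {"shipment_id": "SHP-001"}),
)
```

Because each request carries everything the server needs, it can be routed through ordinary load balancers and gateways, which is what makes the microservice-style deployment possible.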

Remote MCP servers also introduce network dependency and added latency, which must be evaluated against enterprise reliability requirements (e.g., RTO/RPO, failover strategies, and QoS guarantees). High‑availability architectures—load-balancing, retries, regional replicas—become critical when servers are no longer local.
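One way to picture the retry and regional-replica pattern is the sketch below. It assumes a caller-supplied `send` function that raises `ConnectionError` on failure; the function name, endpoint URLs, and retry defaults are illustrative choices, not recommendations from any MCP specification.

```python
import time
from typing import Callable, Optional, Sequence


def call_with_failover(
    send: Callable[[str], str],
    endpoints: Sequence[str],
    retries_per_endpoint: int = 2,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each regional endpoint in order, retrying with exponential backoff."""
    last_error: Optional[Exception] = None
    for endpoint in endpoints:
        for attempt in range(retries_per_endpoint):
            try:
                return send(endpoint)
            except ConnectionError as exc:
                last_error = exc
                sleep(base_delay * 2 ** attempt)  # 0.1s, 0.2s, ...
    raise RuntimeError("all MCP endpoints failed") from last_error


def fake_send(endpoint: str) -> str:
    """Hypothetical transport in which the primary region is down."""
    if "eu-west" in endpoint:
        raise ConnectionError("primary unreachable")
    return f"ok from {endpoint}"


result = call_with_failover(
    fake_send,
    ["https://eu-west.mcp.example.com", "https://us-east.mcp.example.com"],
    sleep=lambda _: None,  # skip real waiting in this demo
)
```

In production this logic would more likely live in a gateway or service mesh than in each client, but the trade-off is the same: every retry adds latency that has to fit within the workflow's response-time budget.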

Case Study:

Shipping & Logistics – Real-Time Shipment Tracking and Exception Handling

Scenario:

A global logistics company wants its AI assistant to handle queries like: "Where is my shipment? Can you update the delivery address and check if customs clearance is complete?"

Challenges:

- The LLM needs access to multiple systems:

  - Tracking API for real-time location

  - Warehouse Management System for inventory status

  - Customs Clearance System for compliance updates

- Traditional integrations require hardcoding APIs and workflows, making them rigid and costly to maintain.

Solution leveraging MCP:

- MCP Host: Customer-facing chatbot or operations dashboard

- MCP Clients: Connect to MCP servers for tracking, warehouse, and customs data

- MCP Servers: Expose context as tools (get_shipment_status, update_address), resources (shipment documents), and prompts (structured workflows)

- The LLM dynamically discovers and invokes these tools via MCP, retrieves real-time data, and executes updates without manual intervention.
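The discover-then-invoke flow can be sketched without any SDK. In the example below, the tool names `get_shipment_status` and `update_address` come from the scenario above; the registry, the decorator, the argument names, and the shipment data are hypothetical and exist only to make the flow visible.

```python
from typing import Any, Callable, Dict

# Fake backing data standing in for the tracking system.
SHIPMENTS = {"SHP-001": {"status": "in transit", "address": "1 Old Road"}}

TOOLS: Dict[str, Dict[str, Any]] = {}


def tool(description: str) -> Callable:
    """Register a function as a discoverable tool with a description."""
    def register(fn: Callable) -> Callable:
        TOOLS[fn.__name__] = {"description": description, "handler": fn}
        return fn
    return register


@tool("Return the current status of a shipment.")
def get_shipment_status(shipment_id: str) -> str:
    return SHIPMENTS[shipment_id]["status"]


@tool("Change the delivery address of a shipment.")
def update_address(shipment_id: str, new_address: str) -> str:
    SHIPMENTS[shipment_id]["address"] = new_address
    return f"address updated to {new_address}"


def list_tools() -> Dict[str, str]:
    """What the client shows the LLM: names and descriptions only."""
    return {name: meta["description"] for name, meta in TOOLS.items()}


def call_tool(name: str, **arguments: Any) -> str:
    """Dispatch a tool call chosen by the LLM."""
    return TOOLS[name]["handler"](**arguments)
```

Adding a new capability means registering one more function; the host and the workflow logic stay unchanged, which is the flexibility the case study relies on.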

Business Impact:

- Faster Resolution: Real-time answers reduce query handling time by 30–50%.

- Lower Costs: No hardcoded integrations; maintenance costs drop by 40%.

- Better Customer Experience: Instant updates improve satisfaction and retention.

- Operational Efficiency: Automates multi-system tasks, freeing staff for exceptions.

- Scalable & Flexible: Easily add new tools without workflow rewrites.

- Reduced Errors & Risk: Controlled prompts ensure compliance and accuracy.

Note: These performance ranges reflect typical improvements reported in logistics automation and API modernization benchmarks; actual impact may vary by system complexity and baseline process efficiency.

Industry Tools for Converting APIs to MCP:

| Tool / Platform | Type | Key Features | Language / Tech |
|---|---|---|---|
| openapi-mcp-generator | CLI / SDK | Converts OpenAPI specs to MCP servers; supports OAuth2, Zod validation | TypeScript |
| api-wrapper-mcp | Wrapper | Wraps REST APIs as MCP tools using YAML config; supports Claude integration | Go |
| RapidMCP | Hosted Service | No-code conversion of REST APIs to MCP servers; remote deployment | Cloud-based |
| mcp.run | Platform | Registry + remote execution of MCP servers; dynamic updates | Cloud-based |
| VS Code API-to-MCP | IDE Extension | Scans code for REST APIs and auto-generates MCP wrappers | Node.js |
| Tyk API Gateway | Gateway | Adds MCP interface to existing APIs; centralized auth and observability | Enterprise Gateway |
| Anthropic MCP SDK | SDK | Official SDK for building MCP servers and clients | TypeScript |

Important Note on API → MCP Conversion

While these tools accelerate MCP adoption, conversion is not purely syntactic. Even when OpenAPI specs or REST endpoints are auto‑wrapped, teams must validate:

- Semantic naming correctness (tool names, parameter conventions, capability descriptions)

- Error semantics alignment (HTTP status codes → MCP error types)

- Metadata exposure (field descriptions, constraints, intent cues that LLMs rely on)

- Safety and policy surface (which capabilities should or should not be exposed to agents)

This ensures that the resulting MCP server is not only functional but also usable, safe, and interpretable by LLM-powered agents.
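The error-semantics point is a good example of why conversion needs review. The sketch below maps upstream HTTP status codes to a tool-result-shaped dictionary with an `isError` flag and a text hint the LLM can act on. The grouping of status codes and the wording of the hints are assumptions made for illustration; each team would choose its own.

```python
def http_to_tool_result(status: int, body: str) -> dict:
    """Translate an upstream HTTP response into a tool-result-shaped dict."""
    if 200 <= status < 300:
        return {"isError": False, "content": [{"type": "text", "text": body}]}
    if status in (401, 403):
        hint = "Not authorized for this operation; do not retry."
    elif status == 404:
        hint = "Resource not found; check the identifier."
    elif status == 429 or status >= 500:
        hint = "Upstream temporarily unavailable; retrying later may help."
    else:
        hint = "Request rejected; check the arguments."
    return {
        "isError": True,
        "content": [{"type": "text", "text": f"HTTP {status}: {hint}"}],
    }
```

Returning a readable hint instead of a bare status code gives the agent something to reason about, for example whether to retry, ask the user for a different identifier, or stop.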

How Can Enterprises Scale Up Integration and Adoption?

As organizations embrace protocols like MCP, A2A, ACP, and AP2, scaling adoption requires a structured approach that balances technical readiness, governance, and ecosystem alignment.

What Next?

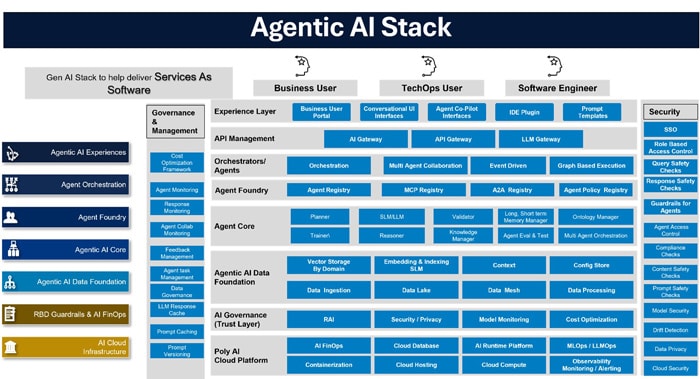

At Infosys, we are building an agentic stack under the Infosys Topaz Fabric, enabling customers to select and integrate models, MCP servers, or custom agents to create enterprise-specific solutions.

We recommend that enterprises undertake a comprehensive assessment of their current integration landscape and then take the following steps:

1. Establish a Strategic Roadmap

- Assess Use Cases: Identify high-impact workflows (e.g., multi-agent collaboration, agentic commerce).

- Prioritize Protocols: Choose MCP for context integration, A2A for agent collaboration, ACP for interoperability, and AP2 for secure payments.

2. Build Modular Architecture

- Protocol Gateways: Implement protocol adapters that wrap existing APIs, enabling gradual migration.

- Microservices Approach: Deploy agents as modular services for easier scaling and orchestration.

3. Leverage Open Standards and SDKs

- Use official SDKs for MCP, A2A, and ACP to accelerate development.

- Adopt Verifiable Credentials (VCs) for AP2 to ensure trust and compliance.

4. Invest in Governance and Security

- Align with Linux Foundation standards for ACP and AP2.

- Implement enterprise-grade security: OAuth, HTTPS, cryptographic signatures.

5. Enable Developer Ecosystem

- Provide internal sandboxes for testing multi-agent workflows.

- Encourage open-source contributions and interoperability testing.

6. Scale Through Automation

- Use orchestration platforms to manage agent discovery, task routing, and lifecycle.

- Integrate monitoring and observability for performance and compliance.

7. Foster Cross-Functional Collaboration

- Involve IT, security, compliance, and business teams early.

- Create training programs for developers and architects on protocol adoption.

8. Manage Protocol and Server Lifecycles

Enterprise-scale adoption requires mature lifecycle management to ensure long-term stability and compatibility.

- Versioning Strategy: Define a clear versioning scheme for MCP servers, tools, and schemas to avoid breaking downstream agents.

- Backward Compatibility: Ensure new protocol versions maintain compatibility with existing agents, clients, and workflows.

- Rolling Server Updates: Adopt blue–green or canary release models for MCP servers to minimize disruption.

- Deprecation Policies: Communicate capability removal timelines and migration paths for downstream teams and agents.

- Schema Evolution: Use formal schema evolution rules to avoid changes that invalidate historical prompts, workflows, or agent memories.

- Version Change Communication: Version updates should be published through a registry, discovery service, or metadata channel, so downstream agents and hosts can automatically detect changes and adjust behavior safely.

These practices help MCP‑based systems evolve predictably and remain reliable at enterprise scale.
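A schema evolution rule can be as simple as an automated check in the release pipeline. The sketch below compares two versions of a tool's JSON-Schema-style input definition and reports changes likely to break existing callers. The three rules shown (no removed parameters, no changed types, no new required parameters) are one plausible policy, and the schemas and the `locale` parameter are hypothetical.

```python
from typing import List


def breaking_changes(old: dict, new: dict) -> List[str]:
    """List changes to a tool's input schema that could break existing callers."""
    problems = []
    old_props, new_props = old.get("properties", {}), new.get("properties", {})
    for name in old_props:
        if name not in new_props:
            problems.append(f"removed parameter: {name}")
        elif old_props[name].get("type") != new_props[name].get("type"):
            problems.append(f"changed type of parameter: {name}")
    for name in new.get("required", []):
        if name not in old.get("required", []):
            problems.append(f"new required parameter: {name}")
    return problems


v1 = {"properties": {"shipment_id": {"type": "string"}}, "required": ["shipment_id"]}
# v2 adds an optional parameter: existing callers keep working.
v2 = {
    "properties": {"shipment_id": {"type": "string"}, "locale": {"type": "string"}},
    "required": ["shipment_id"],
}
# v3 makes the new parameter mandatory: existing callers would start failing.
v3 = {**v2, "required": ["shipment_id", "locale"]}

compatible = breaking_changes(v1, v2)    # []
incompatible = breaking_changes(v1, v3)  # ["new required parameter: locale"]
```

A non-empty result would typically block the release or force a major version bump, tying the versioning and deprecation policies above to something enforceable.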

Forecast:

Within the next 2–3 years, agent protocols will become foundational for AI-driven workflows, with AP2 emerging as a critical enabler for trusted agentic commerce.

Conclusion

The future of APIs is evolving from static, request-response interfaces to intelligent, context-aware, and agent-driven ecosystems. Traditional APIs will remain foundational, but their role is shifting—they will serve as the underlying infrastructure while AI-enabled protocols like MCP, A2A, ACP, and AP2 introduce a new layer of intelligence and interoperability.

To stay foundational in an AI‑first world, APIs themselves must expose machine‑consumable semantics—clear metadata, introspection capabilities, deterministic behavior, and predictable structures—to make them fully AI‑ready.

- MCP transforms APIs into dynamic context providers for LLMs, enabling adaptive reasoning and tool integration.

- A2A redefines peer-to-peer communication, allowing autonomous agents to collaborate without bespoke connectors.

- ACP creates a universal communication standard for multi-agent systems, bridging fragmented frameworks.

- AP2 extends this evolution into commerce, embedding trust and accountability into agentic payment flows.

These protocols do not replace APIs; they augment them, making them AI-ready for complex workflows, secure transactions, and cross-platform collaboration. For enterprises, adopting these standards means moving beyond isolated endpoints toward API ecosystems that are intelligent, interoperable, and future-proof.

The next generation of APIs will be agent-aware, protocol-driven, and trust-centric, forming the backbone of digital transformation in an era where AI agents orchestrate tasks, decisions, and commerce autonomously.

