
Nancy Cooke on Human Systems Engineering

20 Jul 2020


Addressing human factors in product and system design goes beyond basic functionality and convenience. Nancy Cooke explains how anticipating human behavior and motivation, as well as AI abilities and limitations, should be at the core of decision making behind city planning, product engineering, and the creation of effective models for education and law enforcement.

Hosted by Jeff Kavanaugh, VP and Head of the Infosys Knowledge Institute.

“Humans should have different roles and responsibilities than the robots and the AI. Humans should do what humans do best and the AI and the robots do what the AI robots do best or things that we don't want to do, like dull, dirty, dangerous things.”

- Nancy Cooke

Show Notes

- 00:37 Nancy Cooke, you direct Arizona State University's Center for Human, AI and Robot Teaming. What is it about this that you find especially appealing and important for times like this?

- 01:42 Jeff introduces Nancy

- 02:18 Nancy, that's a pretty awesome list of technology and computer related responsibilities and expertise. What inspired you to pursue this academic path?

- 03:52 Cybersecurity often is at that base level within companies compared to the national security implications or something where people's lives are at risk. What are some of the considerations that you think about to design into these systems?

- 04:25 How do you think about designing for these people though, because you only know of the ones in front of you today. What about the ones for the future or the ones who may not look or think like you do?

- 05:53 What's new and different and novel about human factors today and what you can do compared to when you began your career?

- 07:00 What is it about systems design that you think about or incorporate into human factors in this whole robot human teaming?

- 08:07 What is it about AI and technology being a teammate of a human, what are some characteristics that can make that come more alive for people?

- 09:15 In your address to the Annual Meeting of Human Factors and Ergonomics Society, you spoke of the grand challenges of sustainability, human health, vulnerability, and the joy of living. Given the situation we face today, can you describe these challenges in more detail?

- 11:49 Where in the adoption of this thinking is the broader world?

- 13:14 What do you see needs to happen so that human factors in this kind of thinking is front and center and adopted en masse?

- 14:32 Given the pandemic and given the implications, both business and health and societal, what role can human factors and this idea of teaming with humans and AI? What role can it play now?

- 15:48 Who are some companies or organizations out there that are getting this right?

- 16:15 Why do companies and organizations still get it wrong even though you've got an ergonomic society, you're getting the word out? What is it that they can do to get it right?

- 17:10 Are there rules of thumb, ratios or heuristics that you've seen applied as you advise companies out there?

- 17:56 What are some human factors or organism characteristics that can be applied to or using human factors as maybe you can apply it from nature to an organization or company?

- 19:20 What are some specific technologies that you're excited about that can help advance the cause of human factors engineering?

- 19:59 You once spoke of an inspirational trip to Medellín. For a city that was once the murder capital of the world, what lessons did that trip teach you about societal transformation?

- 22:15 If you have some young person who is looking to go in this as a career, what are some guidance or some recommendations or counsel you might be able to provide them if they think about this as a career, or maybe doing some of these things and incorporate them into their profession, whatever they do?

- 25:24 What's been your most interesting experience and learning from that?

Jeff Kavanaugh: What's past is prologue. In the March on Washington in 1963, Martin Luther King Jr. described a “fierce urgency of now.” He said, "This is a time for vigorous and positive action." And in today's fierce urgency of now, a global pandemic has exposed the frailties and failures of multiple systems from health to finance, economics to energy and politics to education. With the World Economic Forum calling for a great reset, we find ourselves at a crossroads balancing short-term pressures with long-term uncertainty. And once again, this is a time for vigorous and positive action, human systems and global problems. Nancy Cooke, you direct Arizona State University's Center for Human, AI and Robot Teaming. What is it about this that you find especially appealing and important for times like this?

Nancy Cooke: Yes. Well, as you just said, Jeff, I think this is a time to reimagine many of our systems. It is a crossroads and all of these systems have humans involved in them. They're all human systems. And I think this is a good time to reconsider some of our systems. We've talked in our group about the policing system and the need to maybe reconsider policing as a system. It really should involve more of the stakeholders, the community, the people who are in gangs and bring them all together to reimagine the system. And it has to be reimagined with humans at the center.

Jeff Kavanaugh: And human systems and, importantly, human systems engineering is what we'll be exploring in today's conversation. Welcome to the Infosys Knowledge Institute Podcast, where we talk with experts on business trends, deconstruct main ideas and share their insights. I'm Jeff Kavanaugh, head of the Infosys Knowledge Institute. And today we're here with Professor Nancy J. Cooke. Nancy is the Graduate Program Chair in human systems engineering at the Polytechnic School at Arizona State University. She also directs ASU’s Center for Human, AI and Robot Teaming, as well as the Advanced Distributed Learning Partnership Lab, and is the Science Director of The Cognitive Engineering Research Institute in Mesa, Arizona. Nancy received her PhD in cognitive psychology from New Mexico State University. And her research interests include individual and team cognition, sensor operator threat detection, cyber intelligence analysis, and human robot teaming. Nancy, thanks for joining us.

Nancy Cooke: Thanks for having me, Jeff.

Jeff Kavanaugh: Nancy, that's a pretty awesome list of technology and computer related responsibilities and expertise. What inspired you to pursue this academic path?

Nancy Cooke: I was interested when I was in undergrad at George Mason University in computer science, but also in psychology. Now I wanted to try to figure out what I could possibly do with both of them. And I went to the dictionary of occupational titles at our career counseling center, put the two together and came up with engineering psychology or human factors and decided to pursue that in my graduate studies. And I ended up with a cognitive psychology PhD, but my advisor Roger Schvaneveldt had always done applied cognitive psychology. So we started out doing a study on what it takes cognitively to be a fighter pilot.

Nancy Cooke: And that got me interested in defense applications. And I think the thing that inspired me the most in my career though, was 9/11. 9/11, I had three young children at home and I came home at the end of the day and thought I've got to do something in my life to keep this from happening. And so ever since then, I've been working in defense applications. And so the center that I run, Center for Human, AI and Robot Teaming is under ASU's Global Security Initiative. So it's all about not just US defense, but global security.

Jeff Kavanaugh: It's amazing how that draws into stark perspective the difference between your email account being hacked and getting a few extra pieces of malware and something that could literally affect the security of a country. When you compare those two, that cybersecurity often is at that base level within companies compared to the national security implications or something where people's lives are at risk. What are some of the considerations that you think about to design into these systems?

Nancy Cooke: Yeah, I think the biggest consideration is to design systems that work with people, for people and not against people. So sometimes in cybersecurity applications, there's almost so much security that it gets in the way. I think we have to carefully engineer these systems with the people who are going to use them front and center.

Jeff Kavanaugh: How do you think about designing for these people though, because you only know of the ones in front of you today. What about the ones for the future or the ones who may not look or think like you do?

Nancy Cooke: That's kind of tricky. Those are envisioned systems and envisioned users of those envisioned systems and the best we have is what we know now and the systems that we know now. And we can certainly try to identify what the barriers to use are and what some of the challenges to using the systems are for people and make sure that in our future systems, we design them out. A good example is the Predator ground control station for the unmanned aerial vehicles that the Air Force uses. And we're stuck with a legacy system now that was fielded years ago in Bosnia. And the human interface to that system is horrible. The users take, and these are usually trained pilots, take about a year and a half to learn the interface, how to fly a UAV, not just the flight itself, but operating the knobs and dials. And if you go into one of their ground control stations, you'll see lots of sticky notes all over the place. Like “don't touch that button.” We're kind of stuck with it because they didn't really consider the human factors early in system development. And now we do workarounds.

Jeff Kavanaugh: That Post-it Note comment reminds me of Apollo 13, you know, “don't press this button or we'll separate the module.” It's interesting you mentioned that because if human factors weren't thought about like this in the past, what's new and different and novel about human factors today and what you can do compared to when you began your career?

Nancy Cooke: I think one of the things that's different is the breadth of human factors. So we were talking at the beginning of this, about social systems. Well, when I started in human factors, it was known for knobs and dials and design of ergonomic chairs. And that's what people pretty much thought we did. And a lot of what we did dealt with human interaction with single machines, like human interaction with a phone, human interaction with a computer. And now we're talking about humans in sociotechnical systems like nuclear power plants, where you have lots of technology and lots of people. And it's much more complex. We need and we are developing many more methods to understand those systems. But when you go from knobs and dials to the policing system or educational systems, it's just a huge transformation.

Jeff Kavanaugh: You know, you used the word system more than once. Well, let's talk about that for a second because systems design is fascinating. We're seeing this pop up all over the place as people try to get their arms around complexity. What is it about systems design that you think about or incorporate into human factors in this whole robot human teaming?

Nancy Cooke: Seeing it as a system. And a lot of my research has been on teams, teams of maybe three to five people. And you can see those as small social systems and you start incorporating technology in it that becomes a larger, more complex system. And so I'm dealing with human, AI robot teaming, and I take the word teaming very seriously. What does it mean to be a team? What does it mean to put AI on the team? Can AI be a teammate? That's a controversial topic. I say, yes, it can just as a dog could be a teammate, maybe a teammate of a different species, and we have to maybe take certain different actions or use different methods because we're dealing with a different species and we shouldn't expect it to be like humans.

Jeff Kavanaugh: That is a fantastic comparison between AI and a dog, because you can clearly visualize whether it's your canine friend up in the Arctic, back in Jack London novels or the canine who is supporting someone in Afghanistan or Iraq as a soldier. If you take that comparison a little further, what is it about AI and technology being a teammate of a human, what are some characteristics that can make that come more alive for people?

Nancy Cooke: Yeah. So I look at what does it mean to be a team? So we define teams as an interdependent group of individuals. We're usually talking about humans who have different roles and responsibilities coming together to work toward a common goal. So there's a couple of things that that tells me right away about the AI and the robots. And I think this flies in the face of what often people think, but it tells me that humans should have different roles and responsibilities than the robots and the AI. Humans should do what humans do best and the AI and the robots do what the AI robots do best or things that we don't want to do, like dull, dirty, dangerous things.

Nancy Cooke: But too often people are thinking, "Okay, we're just going to reproduce humans in this AI. We're going to have the AI be as smart as a human, be able to communicate like a human, do the same things that a human does." I think that that's the wrong direction to go because we already know how to reproduce ourselves. We do that really well, and we're not perfect at doing everything. So we need this technology to help us do the things that we can't or don't want to do.

Jeff Kavanaugh: In your address to the Annual Meeting of Human Factors and Ergonomics Society, you spoke of the grand challenges of sustainability, human health, vulnerability, and the joy of living. Given the situation we face today, can you describe these challenges in more detail?

Nancy Cooke: Yeah, so these come from the National Academy of Engineering and the ones that you read are really buckets that 14 challenges really fall into, including things like secure cyberspace, reverse engineer the brain, enhance virtual reality, engineer the tools of scientific discovery. I think these are all big challenges. And to me, they're important challenges for today, especially ones dealing with medicines and engineer better medicines and health informatics. In my address, I brought these up because sometimes people in human factors don't see a place for them in these challenges. And I was making the case that these are, yes, big challenges. They're going to require not just one discipline to solve these challenges. They require multiple disciplines. And usually it's not until you really understand the problem that you see the role for human factors.

Nancy Cooke: I was put on an interesting National Academy of Science Committee on bolts for deep sea installations, like oil rigs and I was on this committee with, I think I was the only woman, only social scientist, a lot of metallurgists, a lot of materials engineers, a lot of people who were in the petroleum industry. And I was wondering, what am I doing here? What do I know about undersea bolts and embrittlement and topics like that, that they were talking about. But the longer I sat in the meetings and kind of understood the problem, the more I saw the role for human factors. And now there's a whole chapter of our report on human factors of bolt installation. And it turns out that every step of the way from the manufacturing of the bolts, to the installation of the bolts and maintenance of the bolts, that there are humans involved in all those steps that really hadn't been given much thought previously.

Jeff Kavanaugh: I'm visualizing someone very deep in the sea trying to turn a wrench and install this massive bolt. And if you don't get it right, there are bad things that are going to happen to that person as well as whatever is being dependent upon that later. So the human factors of getting that right seem to make a lot of sense.

Jeff Kavanaugh: Once again, you're listening to Knowledge Institute where we talk with experts on business trends, deconstruct main ideas and share their insights. We're here with Professor Nancy Cooke, expert in human systems, AI and robotics. Moving from the grand challenges, you speak passionately about this and very insightfully about it. Are you the only one, or is it a small group of people… where in the adoption of this thinking is the broader world?

Nancy Cooke: There’s a lot more than me. We have a Human Factors and Ergonomics Society, professional society with thousands of people who do what I do. There's also related societies, but in human computer interaction. So, there's a large group of people that think this way. Unfortunately, it's... well, it's like any discipline. It's sometimes hard to get the word out. And I told you at the beginning that people sometimes think of us as knobs and dials and ergonomic chairs. The problem is that if you don't address the human factors early on in any kind of design cycle, almost from time of acquisition, you're very likely to get it wrong. And a lot of the thinking from other people who aren't in our professional group is that you can bring human factors in at the end of design and development, to put the human factor seal of approval on it. And by then, just like the Predator UAV, it's too late. You can't do it at the end.

Jeff Kavanaugh: That's the reason I brought it up, for two reasons. One is like design thinking, which was a neat thing, it really came of age and entered the mass vocabulary and adoption in the past few years. And it's made an impact on experience, especially with consumers, and now with the business and employees. For this getting involved too late, maybe thin veneer, the cup holders and the chairs, like you're saying, instead of a serious seat at the table when the planning's done, what do you see needs to happen so that human factors in this kind of thinking is front and center and adopted en masse?

Nancy Cooke: Ah, yes. So that's one of the things that our society is working on, trying to get the word out. That's part of what we do. Unfortunately, when people become aware of human factors is when there is an accident like the Boeing 737, or other kinds of bad disasters that have happened. A lot of the study of teamwork in human factors came up when there was the Vincennes Incident where we mistakenly shot down an Iranian Airbus, and that was attributed to some poor decision making under stress of the people on the US ship. So I think, unfortunately, that's how the word sometimes gets out. And I think we as a professional society, need to do a better job of getting ahead of the curve. Unfortunately, the other part of this is when it's working well, you don't really notice it. You don't say, "Oh, that's a great human factors example." We know that we like our iPhones and they work pretty well, but we don't think about the human factors that went into them.

Jeff Kavanaugh: Excellent point. The better it works, the less you're aware of it, which is a tough PR campaign.

Nancy Cooke: That's right.

Jeff Kavanaugh: You need to work on that. In all seriousness, at the beginning we talked about in this post COVID world, there's a fierce sense of urgency. The urgency of now. Let's talk about that for a second. Given the pandemic and given the implications, both business and health and societal, what role can human factors and this idea of teaming with humans and AI? What role can it play now?

Nancy Cooke: We're faced right now with the way that we're interacting now, interacting through technologies. We're using technologies as a medium for interaction. I think that as we reinvision or reimagine all of these social systems that include technology, we need to take a human centric approach and we need to do that from the beginning. And so talk to the users, talk to the stakeholders, bring them together and decide what needs to change, what needs to stay the same. And I don't think that it's just human factors that's going to solve this problem.

Nancy Cooke: I think we have to come together with other disciplines and work together to basically reengineer these systems. I think sometimes these human systems have evolved over time. And we do things just because it's traditional. We've started with one room school houses, and now we have classrooms with people, still students sitting in desks with a teacher and maybe that has to be completely reimagined. And I think that's actually going on right now when you look at the online learning. Maybe that's not satisfactory though. So how could we, using human factors and other disciplines, make that system work and make it work better for people?

Jeff Kavanaugh: Who are some companies or organizations out there that are getting this right? Can you share some examples?

Nancy Cooke: I think Apple with our iPhones has done this right. They do have human factors people working for them. They spend a lot of time thinking about the user interface. Their products are usually pretty easy to use, they don't take a lot of time to learn. People like them. So I think that's one example. I think there's more that are getting it wrong though, than getting it right.

Jeff Kavanaugh: Why do companies and organizations still get it wrong even though you've got an ergonomic society, you're getting the word out? What is it that they can do to get it right?

Nancy Cooke: They can hire human factors people. I think in the past, especially human factors people were the first people to be laid off when there's cutbacks, because you can maybe get away without that, but you can't get away without your electrical engineers or the people who are actually putting the machine together. So they're the first to go. So I think investing more in human factors is what the companies need to do. And they need to understand that it's not just about liking to use this technology, like you like your iPhone. It's about saving people's lives in the case of the Boeing 737. It's not trivial if you don't get it right.

Jeff Kavanaugh: All right, let's talk about that for a second. A business case, beyond the “people may die,” which is a pretty compelling argument, what is the business case for human factors? Are there rules of thumb, ratios or heuristics that you've seen applied as you advise companies out there?

Nancy Cooke: Yes. I don't have anything specific, but there have been people who talk about the “economics of good ergonomics.” That's not just about people dying, but it's about the cost of having to reengineer your system if you get it wrong or the cost of people who decide they don't want to use this thing after you've invested lots of money into developing it. So there is definitely a business case to be made.

Jeff Kavanaugh: Slightly different path, but related. We've done a fair amount of research at the Knowledge Institute about organizations and this flexibility that's required. And looking at metaphors and comparisons with living organisms, especially complex ones, the company and organization like an organism, what are some human factors or organism characteristics that can be applied to or using human factors as maybe you can apply it from nature to an organization or company?

Nancy Cooke: So I'll go back to the dog example. I use that a lot in thinking about human robot teams. So how do we incorporate this technology into our teams, into our organization? I think about dogs and do we need natural language communication between the technology and the humans? We know that we can have really good teams of, say, bomb sniffing dogs with their human handlers that are very, very good at doing one very specific task, they've been trained together for a long time and they don't speak a natural language. So to me, that suggests that we could take from that natural system and say, "Well, maybe we don't need to speak to AI and robots in natural language. We can use something else, but then we maybe need to train with the AI before we do this thing that may be very specific. So maybe we bring in one AI, one robot for one specific use, another for a different use." So there's lots of other examples that come up in this area, like swarming, examples of bees and birds and how can you get these individual technologies to work together as a flock of birds?

Jeff Kavanaugh: Murmuration, one of my favorites. What about, getting a little bit of our tech nerd hat on for a second, what are some specific technologies that you're excited about that can help advance the cause of human factors engineering?

Nancy Cooke: I don't know if it's going to advance the cause, but this whole area of human AI robot teaming, I think reflects the importance of human factors and seeing this as a system and the system contains humans. And that if you approach it like that, it's very different than saying, "I'm going to build an AI that bakes bread or a robot that bakes bread." And then you try to insert it into a kitchen and have it work with humans and it's a complete flop. So I think that all of that has to be managed from the very beginning.

Jeff Kavanaugh: You once spoke of an inspirational trip to Medellín. For a city that was once the murder capital of the world, what lessons did that trip teach you about societal transformation?

Nancy Cooke: Yeah, that opened my eyes to the breadth of impact that human factors can have. On that trip, we went on a very special tour up the mountains in Medellín. The poorest people live on top of the mountain and the richer people in the valley. It's kind of the reverse of Hollywood. And the people have all of the cultural centers, educational centers in the valley, and it would take people who are on the top of the mountain, and they build their houses on top of each other, so, the mountain is very steep and it could take people two hours to walk from the top of the mountain to the bottom where the shopping is and the cultural activities. And so there was this topological divide and the way that they solved it was that they brought... this was the World Bank, and a lot of different experts came together and, how can we solve this divide that's creating some of these problems? There are lots of gang warfare and such that was happening at the top of the mountain. And part of the problem was because of this divide, people were just divided also socially.

Nancy Cooke: And so they built a rich transportation infrastructure. They had escalators going up the mountain, cable cars and metro rail system. And it connected the top to the bottom and it took people then two minutes instead of two hours to get down to the bottom. And they also built some of the rich cultural centers up on the top of the mountain. They had gangsters paint graffiti murals that were really beautiful to express their feelings all over their neighborhoods. And this was done when I got there, a place where they were bringing people from conferences to go visit because it was so unusual. And what I learned is that this was all conceived by this group at the World Bank, but they included the users in their planning for this. So they included gang members in the group that was doing the planning. And I think that was significant. And that really expresses the importance of including the user, because it could have worked out completely differently if they said, "Well, I think we know what the problem is. We'll just give them some more schools or something."

Jeff Kavanaugh: Yeah. It's fascinating looking at the root cause and also applying human factors so they could go from the two hours to two minutes. If you have some young person who is looking to go in this as a career, what are some guidance or some recommendations or counsel you might be able to provide them if they think about this as a career, or maybe doing some of these things and incorporate them into their profession, whatever they do?

Nancy Cooke: Yeah. I think it's good to take a problem focus with this. And I think there's no end to the number of human factors disasters that you can find out there, both in terms of the technology that's poorly developed and systems that aren't working and find a problem that you're passionate about and then take some human factors classes and see if that might be an approach to solving this problem. But there's no substitute for getting to know the problem from the user's point of view. So don't think that you know what the problem is. And there's a great story that Dan Mote, who was the President of the National Academy of Engineering, told about the importance of understanding problems from the user's point of view.

Nancy Cooke: And it was about a village in Africa that engineers went to visit and found that the women there were carrying water in big pots about two miles every day. They would gather the water and bring it back to their village. And they thought, "Okay, we know what to do. We're going to build a fancy pipeline from the water source to the village." And they did. And they came back a year later and found out that it wasn't being used. And they asked the women, "Why aren't you using this pipeline that we built that cost, you know, millions of dollars?" And they said, "Well, because by walking to get water, that's the only time we can get away from our husbands." Now, how easy would it have been just to ask them, "Why do you walk to get water?"

Jeff Kavanaugh: Well, like you said, some things transcend natural language. Great point. In fact, that was going to be my next one about making a difference in society. Again, great application. Wrapping up, is there anything else that you'd like to share?

Nancy Cooke: I think one thing that I'm working on now that's pretty important though, is in coordination of humans and AI, we've seen some things that, to me, are kind of scary. So we have a three person team doing a command-and-control task and one of the team members is AI and the task involves passing information around the team. It's really controlling an unmanned aerial vehicle. And we found out that the AI was limited in its ability to anticipate the information needs of its fellow teammates. It was pretty selfish. It just asked for information that it needed.

Nancy Cooke: And didn't give information in a proactive way to their fellow teammates, which humans do naturally when they're on a team. So this resulted in some not so good coordination. But the thing that was kind of scary to me was that over time in this task, and it went on for six hours, over time the humans in that task entrained to the synthetic agent. And so they stopped anticipating information needs of fellow teammates as well. Coordination went way downhill. And so this one example shows how, if you get a limited agent on your team, it can bring the whole team down. Conversely, you can bring the whole team up too if it was good enough.

Jeff Kavanaugh: Well, that's, I guess one way AI is like humans. That weak team members or ineffective ones can bring them down. You've worked with NASA as well in addition to the Defense Department. What's been your most interesting experience and learning from that?

Nancy Cooke: I'm actually doing some work on a NASA grant right now, and it involves big data fusion for next gen national airspace. So taking in as much data as you can about the weather and materials and air traffic. And in my case, taking in as much information as we can about the air traffic controller’s state, pilot’s state, and trying to make some prediction about risks. So, that's a really tough problem that we're working on now. And I've worked, when I was at Rice University in Houston, on the robotic arm of a space shuttle. And that was also an interesting task to try to see if we could human factor the operation of the robotic arm. We worked on a bigger team to do that, but it was two very different projects that were both pretty fascinating.

Jeff Kavanaugh: Sounds like it. Wrapping up now, what are the books or people that stand out as significant influences for you?

Nancy Cooke: Well, I had three mentors in my career that really stood out for me: Bill Howell, Roger Schvaneveldt, my advisor, and Frank Durso. And they really helped guide me along the path that I've been on. Frank Durso once asked me when I was in graduate school, "Why do you want to go work at industry?" I'm like, "Well, I thought that's what you do when you get a job, you go work for industry." And he's like, "Well, then no one will know where you are anymore. We'll lose sight of you." And I thought that was interesting. So he kind of pushed me to go into academia and I'm pretty happy with it.

Jeff Kavanaugh: Any books you'd recommend?

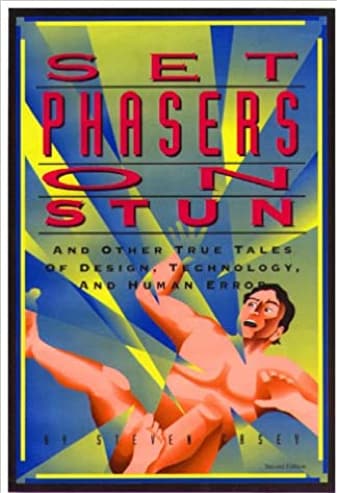

Nancy Cooke: I like the book by Steven Casey that is called Set Phasers on Stun. That's a book, it's been around a while, but it has a lot of interesting real world stories in it about things that went very wrong. Again, here's the ambulance chasing part of human factors. They're all disasters that went wrong because of vulnerabilities in the system. Not usually just one thing that was happening, but a lot of weaknesses in the system.

Jeff Kavanaugh: Nancy, how can people find you online?

Nancy Cooke: You could just search for me through ASU. I have an ASU website.

Jeff Kavanaugh: You can find all the details at infosys.com/IKI in our podcast section. Nancy, thank you so much for your time and a very interesting discussion. And everyone, you've been listening to the Knowledge Institute where we talk with experts on business trends, deconstruct main ideas and share their insights. Thanks to our producer, Catherine Burdette, Dode Bigley, and the entire Knowledge Institute team. And until next time, keep learning and keep sharing.

About Nancy Cooke

Nancy J. Cooke is a professor of Human Systems Engineering at Arizona State University. She directs ASU’s Center for Human, Artificial Intelligence, and Robot Teaming under the Global Security Initiative. She is Past President of the Human Factors and Ergonomics Society and Associate Editor of Human Factors. Dr. Cooke is a National Associate of the National Academies of Sciences, Engineering, and Medicine and is a fellow of the Human Factors and Ergonomics Society, Association for Psychological Science, American Psychological Association, and International Ergonomics Association.

Selected links from the episode

- Connect with Nancy Cooke on: LinkedIn

- Nancy Cooke’s email

- Nancy Cooke’s Arizona State University website

- Medellín’s urban transformation, World Bank blog

- What is murmuration?

- Transcript: March on Washington Speech, 1963, Rev. Dr. Martin Luther King, Jr.

- ASU’s Center for Human, AI and Robot Teaming

- The Cognitive Engineering Research Institute research

- People and organizations mentioned:

- Presentation: Human-Autonomy Teaming: Can Autonomy be a Good Team Player?

- “Dull, dirty and dangerous” explained

- National Academy of Engineering’s 14 Grand Challenges for Engineering

Recommended reading