API, Microservices

As enterprises modernize their digital landscape, application programming interfaces (APIs) have become foundational building blocks. What began as a mechanism for system integration has evolved into a core construct for how organizations design applications, share capabilities, and scale innovation across cloud and hybrid environments. Today, APIs shape developer productivity, security posture, and business agility.

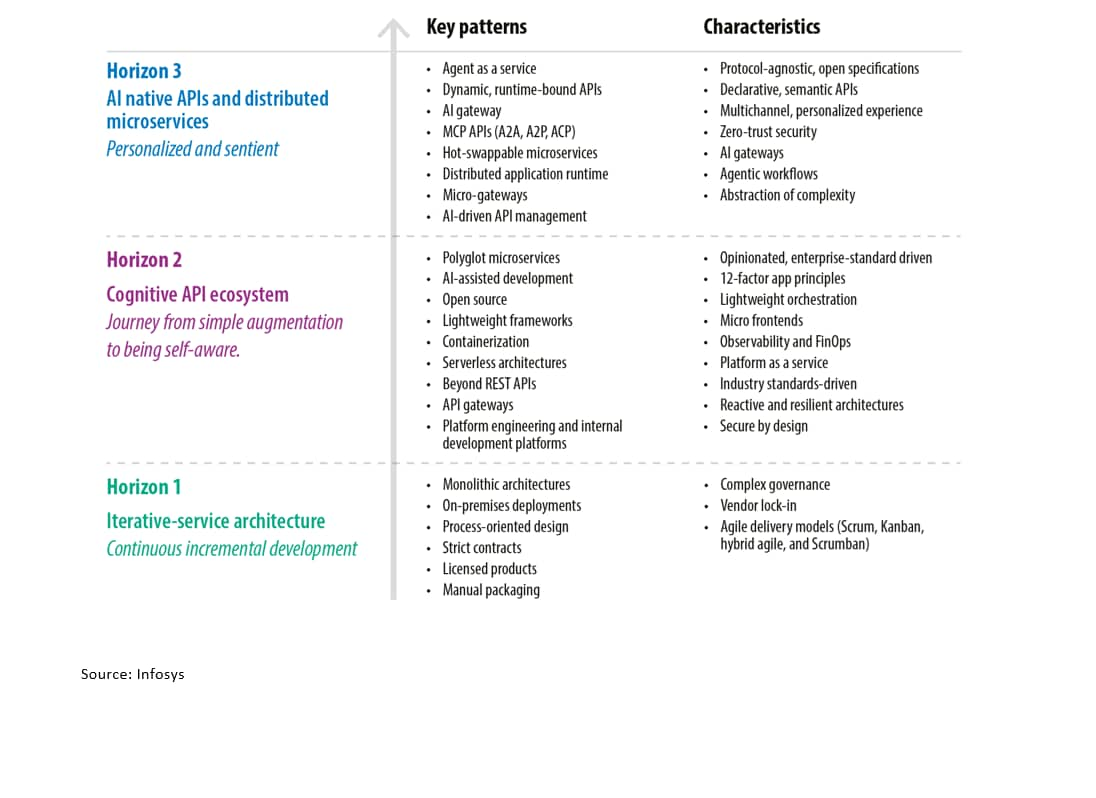

API evolution: From iteration to AI-native architecture

H3

AI-native APIs and distributed microservices

Personalized and sentient

Key Patterns

- Agent as a service

- Dynamic, runtime-bound APIs

- AI gateway

- MCP APIs (A2A, A2P, ACP)

- Hot-swappable microservices

- Distributed application runtime

- Micro-gateways

- AI-driven API management

Characteristics

- Protocol-agnostic, open specifications

- Declarative, semantic APIs

- Multichannel, personalized experience

- Zero-trust security

- AI gateways

- Agentic workflows

- Abstraction of complexity

H2

Cognitive API ecosystem

Journey from simple augmentation to being self-aware.

Key Patterns

- Polyglot microservices

- AI-assisted development

- Open source

- Lightweight frameworks

- Containerization

- Serverless architectures

- Beyond REST APIs

- API gateways

- Platform engineering and internal development platforms

Characteristics

- Opinionated, enterprise-standard driven

- 12-factor app principles

- Lightweight orchestration

- Micro frontends

- Observability and FinOps

- Platform as a service

- Industry standards-driven

- Reactive and resilient architectures

- Secure by design

H1

Iterative-service architecture

Continuous incremental development

Key Patterns

- Monolithic architectures

- On-premises deployments

- Process-oriented design

- Strict contracts

- Licensed products

- Manual packaging

Characteristics

- Complex governance

- Vendor lock-in

- Agile delivery models (Scrum, Kanban, hybrid agile, and Scrumban)

Key trends across API subdomains

Trend 1

Serverless-first development enables autonomous team velocity

Serverless computing represents a fundamental shift in how enterprises approach application development and operational responsibility. Instead of managing infrastructure, teams focus on business logic while cloud providers handle provisioning, scaling, and maintenance. This paradigm abstracts away the complexity of server configuration and helps organizations iterate faster: 64% of enterprises report development cycle reductions of 35% to 40% following serverless adoption. Deployment frequency increases by 71% on average, allowing teams to deliver features and fixes to production multiple times per day without manual infrastructure provisioning or scaling concerns.
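As a minimal sketch of what "focusing on business logic" looks like in practice, the function below follows the shape of an AWS Lambda handler behind an HTTP proxy integration. The `price_with_tax` function, the request fields `amount` and `tax_rate`, and the sample event are hypothetical and exist only for illustration; nothing here comes from the cited figures.

```python
import json


def price_with_tax(amount, tax_rate):
    """Pure business logic: no server, port, or scaling code involved."""
    return round(amount * (1 + tax_rate), 2)


def handler(event, context):
    """Entry point in the style of a Lambda proxy-integration handler.

    The platform is assumed to pass the HTTP body as a JSON string in
    event["body"] and to turn the returned dict into an HTTP response.
    """
    try:
        payload = json.loads(event.get("body") or "{}")
        total = price_with_tax(
            float(payload["amount"]),
            float(payload.get("tax_rate", 0.0)),
        )
    except (KeyError, TypeError, ValueError):
        # Malformed JSON, missing fields, or non-numeric values.
        return {"statusCode": 400, "body": json.dumps({"error": "invalid request"})}
    return {"statusCode": 200, "body": json.dumps({"total": total})}


# Local invocation with a hypothetical event; no infrastructure required.
response = handler({"body": json.dumps({"amount": 50, "tax_rate": 0.5})}, None)
```

Because the handler is a plain function, it can be exercised locally or in a unit test exactly as shown, while provisioning and scaling remain the platform's concern.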

Trend 2

Data mesh architecture unleashes federated data governance and organizational autonomy

Data mesh represents a paradigm shift from centralized data engineering and governance models toward domain-driven, decentralized data ownership where business domains take responsibility for their data as products. This architectural transformation addresses the critical bottleneck of centralized data teams by empowering distributed domain teams to autonomously curate, enrich, and publish data products while maintaining compliance with enterprise governance standards. Organizations implementing data mesh achieve a 30% reduction in data latency by eliminating handoffs between central data teams and domain consumers, accelerating time to insights and enabling data-driven decision-making at the speed of business.
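One way to picture federated governance is a shared catalog that accepts data products from any domain, provided they meet a small set of enterprise-wide rules. The sketch below is an assumption-laden illustration, not a reference design: the `DataProduct` and `Catalog` classes, the `REQUIRED_TAGS` policy, and the sample `sales.orders` product are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical enterprise-wide policy: metadata every data product must carry.
REQUIRED_TAGS = {"owner", "classification", "retention_days"}


@dataclass
class DataProduct:
    domain: str   # owning business domain
    name: str
    schema: dict  # column name -> type name
    tags: dict = field(default_factory=dict)


def governance_violations(product):
    """Return a list of policy violations; an empty list means compliant."""
    missing = sorted(REQUIRED_TAGS - set(product.tags))
    violations = [f"missing tag: {tag}" for tag in missing]
    if not product.schema:
        violations.append("schema must declare at least one column")
    return violations


class Catalog:
    """Shared registry: domains publish on their own, policy is checked centrally."""

    def __init__(self):
        self.products = {}

    def publish(self, product):
        violations = governance_violations(product)
        if violations:
            raise ValueError("; ".join(violations))
        self.products[f"{product.domain}.{product.name}"] = product


catalog = Catalog()
catalog.publish(DataProduct(
    domain="sales",
    name="orders",
    schema={"order_id": "string", "amount": "decimal"},
    tags={"owner": "sales-data-team", "classification": "internal", "retention_days": 365},
))
```

The domain team owns the schema and content; the central function only enforces the policy, which is the division of responsibility the paragraph above describes.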

Trend 3

Interest in Golang grows in lightweight microservices

The industry is shifting toward Golang for backend services. This trend is driven by the need for green computing and FinOps (cloud cost optimization). Unlike runtimes that need substantial resources at startup, Go compiles to native machine code and produces binaries with a small memory footprint. Its goroutines (lightweight threads scheduled by the Go runtime) allow a single server to handle thousands of concurrent tasks with minimal RAM. This shift is shaping a future where compute density is maximized. Enterprises can run more microservices on smaller, cheaper cluster instances, directly reducing infrastructure bills while improving scale and reliability for high-load applications.

Trend 4

A unified .NET platform supports cloud-ready and AI-enabled development

The fragmentation of the past (with development split across .NET Framework, .NET Core, and Xamarin) is over. The industry is rapidly standardizing on a single .NET runtime (currently .NET 8/9/10) that delivers uniform behavior across cloud, desktop, and mobile. This unification provides a streamlined .NET SDK experience, where one set of tools builds everything from web frontends to high-performance background workers. A key driver here is .NET Aspire, an opinionated, cloud-ready stack that simplifies orchestrating distributed applications, handling observability, and wiring up dependencies like Redis or PostgreSQL automatically. The future is portable high performance: Developers can now ship self-contained, single-file microservices using Native ahead-of-time (AOT) compilation, which start almost instantly and deploy easily to Linux containers, leveraging HTTP/3 and faster base class library algorithms for maximum throughput.

Trend 5

Java advances toward a cloud-native and AI-first platform

Java is consolidating into a cohesive application platform centered on Java 25/26, Spring Boot 4.0, and Jakarta EE 11. This shift reduces long-standing complexity while enabling cloud-native execution, AI-native integration, and high-performance workloads, positioning Java for both enterprise modernization and next-generation application development.

At the language and runtime levels, virtual threads (a finalized feature since Java 21) support massive I/O concurrency using familiar blocking code, reducing reliance on reactive models. Combined with structured concurrency, they simplify safe parallel execution and are now viable for mainstream microservices and APIs through Spring Boot 3.2+.

Trend 6

Service mesh adoption transforms microservices security and observability

Service mesh architectures have emerged as a critical infrastructure layer for managing complex microservices ecosystems at enterprise scale. Istio, the most prominent service mesh implementation to have graduated from the Cloud Native Computing Foundation (CNCF), addresses fundamental challenges including zero-trust security, advanced traffic management, and comprehensive observability without requiring application code changes. The architecture separates infrastructure concerns from business logic through sidecar proxies deployed alongside each microservice, enabling uniform policy enforcement across heterogeneous technology stacks. Organizations implementing service mesh report significant improvements in operational resilience, with automated traffic routing, health checks at the pod level, and seamless blue/green deployments that minimize downtime during frequent production releases.
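The traffic-shifting idea behind blue/green and canary releases can be sketched as a weighted routing decision made outside the application code. The snippet below is only a conceptual illustration: the `pick_version` function and the weight tables are hypothetical, and hashing the request ID is a simplification chosen here for repeatability, not a description of how Istio or its sidecar proxies select a backend.

```python
import hashlib


def pick_version(request_id, weights):
    """Map a request to a service version according to percentage weights.

    `weights` maps version name -> integer percentage and must sum to 100.
    The same request_id always lands in the same bucket, which keeps the
    sketch deterministic and easy to test.
    """
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # value in 0..99
    cumulative = 0
    for version, weight in weights.items():
        cumulative += weight
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable when weights sum to 100")


# Hypothetical rollout stages: all traffic on blue, a 10% canary, full cutover.
before_release = {"blue": 100, "green": 0}
canary = {"blue": 90, "green": 10}
after_cutover = {"blue": 0, "green": 100}

version = pick_version("req-42", canary)
```

Changing only the weight table moves traffic between versions, which is why the application services themselves need no code change during a release.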

Trend 7

Polyglot cloud-native frameworks revolutionize microservices development velocity

Modern polyglot Java frameworks including Quarkus, Micronaut, and Helidon have emerged as transformative technologies for cloud-native and serverless application development, addressing critical limitations of traditional enterprise Java stacks. Quarkus achieves millisecond startup times through compile-time dependency injection, classpath trimming, and AOT compilation via GraalVM, enabling deployment to serverless platforms like AWS Lambda and Azure Functions without the cold-start performance penalties typical of traditional JVM applications. Its reactive programming model supports nonblocking I/O operations and concurrent session handling at massive scale, both critical requirements for modern microservices that must handle fluctuating traffic patterns within the resource constraints of containerized environments.

Trend 8

Agent-ready API enablement becomes mainstream

Agent-ready API enablement has recently evolved into a more structured and scalable approach for connecting AI agents with backend services. A key enabler is OpenAPI Toolset (part of the Google Agent Development Kit, or ADK), which can automatically transform a standard OpenAPI specification into callable tools (e.g., RestApiTool) that AI agents can invoke directly, removing the need for manual wrapper coding. On the GraphQL side, Apollo MCP Server has matured into a robust production-grade solution for exposing GraphQL operations as agent-consumable tools under the Model Context Protocol (MCP) standard. GraphQL queries and mutations defined in the schema or persisted-query manifests are automatically available to any MCP-capable client, giving agents structured access to data and business logic without bespoke integration. Beyond these, newer frameworks are emerging that further streamline agent-to-API workflows. For example, tools like FastAPI-MCP enable Python-based REST services to be exposed as MCP servers with minimal configuration, making them readily usable by agents. There is also growing emphasis on dynamic tool discovery and selection, as demonstrated by frameworks such as ScaleMCP, which allows agents to retrieve and register tools at runtime, reducing overhead and avoiding redundant tool-repository maintenance.
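To make the "specification in, callable tools out" idea concrete, here is a minimal standard-library sketch that turns each operation in an OpenAPI document into a generic tool descriptor. It does not use or reproduce the ADK, Apollo MCP Server, or FastAPI-MCP APIs: the `openapi_to_tools` function, the descriptor layout, and the sample spec are assumptions made for illustration, and the sketch ignores request bodies and path-level parameters.

```python
from typing import Any

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options"}


def openapi_to_tools(spec: dict[str, Any]) -> list[dict[str, Any]]:
    """Turn each OpenAPI operation into a generic tool descriptor."""
    tools = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            if method.lower() not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            properties, required = {}, []
            for param in operation.get("parameters", []):
                properties[param["name"]] = param.get("schema", {"type": "string"})
                if param.get("required", False):
                    required.append(param["name"])
            # Fall back to a synthetic name when operationId is missing.
            fallback = f"{method}_{path.strip('/').replace('/', '_')}"
            tools.append({
                "name": operation.get("operationId") or fallback,
                "description": operation.get("summary", ""),
                "http": {"method": method.upper(), "path": path},
                "input_schema": {
                    "type": "object",
                    "properties": properties,
                    "required": required,
                },
            })
    return tools


# Hypothetical two-operation spec used only for illustration.
EXAMPLE_SPEC = {
    "openapi": "3.0.0",
    "paths": {
        "/orders/{orderId}": {
            "get": {
                "operationId": "getOrder",
                "summary": "Fetch a single order",
                "parameters": [
                    {"name": "orderId", "in": "path", "required": True,
                     "schema": {"type": "string"}},
                ],
            },
        },
        "/orders": {
            "get": {"summary": "List orders"},
        },
    },
}

tools = openapi_to_tools(EXAMPLE_SPEC)
```

Each descriptor carries a name, a description, and a JSON-Schema-style input definition, which is roughly the information an agent needs to decide when and how to call an endpoint; production toolsets additionally handle authentication, request bodies, and response parsing.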

Trend 9

Observability expands to support AI-driven and agentic systems

The rise of AI-powered applications and agentic workflows has driven observability platforms to evolve far beyond traditional application performance monitoring (APM). Modern AI observability tooling now captures not only infrastructure metrics but also prompt-level traces, token usage, model invocation latency, error rates, and downstream embedding drift or safety signals. For instance, Amazon CloudWatch now offers a dedicated generative AI observability capability that delivers out-of-the-box dashboards tracking latency, token consumption, errors, and model usage and, crucially, supports end-to-end prompt tracing across models, knowledge bases, tools, and agent workloads. Beyond CloudWatch, observability is increasingly standardized around OpenTelemetry (OTel), now extended with generative AI semantic conventions and instrumentation libraries that automatically capture telemetry for LLM-based applications, including prompts, completions, tool calls, token counts, and cost metrics. Vendors such as IBM Instana have released generative AI observability sensors that leverage OTel to instrument full AI stacks (models, agents, runtimes, infrastructure), offering integrated tracing, performance monitoring, alerting, and cost/resource analytics. These developments help organizations deploy generative AI and agentic systems in production with greater confidence in reliability, observability, and operational governance.
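The kind of per-call telemetry described above can be sketched without any vendor SDK. The example below records token usage and latency for each model invocation and aggregates them by model. The attribute keys are modeled on the OTel generative AI semantic conventions as an assumption (check the current specification before relying on them), and `LLMUsageRecorder`, `traced_call`, `fake_model`, and the model name `example-model` are hypothetical stand-ins rather than part of any real library.

```python
import time
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class LLMCallRecord:
    """One model invocation, stored as span-like attributes plus a duration."""
    attributes: dict = field(default_factory=dict)
    duration_s: float = 0.0


class LLMUsageRecorder:
    def __init__(self):
        self.records = []

    def record(self, model, input_tokens, output_tokens, duration_s):
        self.records.append(LLMCallRecord(
            attributes={
                # Keys assumed to follow the OTel GenAI conventions.
                "gen_ai.operation.name": "chat",
                "gen_ai.request.model": model,
                "gen_ai.usage.input_tokens": input_tokens,
                "gen_ai.usage.output_tokens": output_tokens,
            },
            duration_s=duration_s,
        ))

    def totals_by_model(self):
        totals = defaultdict(lambda: {"calls": 0, "input_tokens": 0, "output_tokens": 0})
        for rec in self.records:
            entry = totals[rec.attributes["gen_ai.request.model"]]
            entry["calls"] += 1
            entry["input_tokens"] += rec.attributes["gen_ai.usage.input_tokens"]
            entry["output_tokens"] += rec.attributes["gen_ai.usage.output_tokens"]
        return dict(totals)


def traced_call(recorder, model, call_model, prompt):
    """Time a model call and record its token usage.

    `call_model` is a hypothetical callable returning
    (completion_text, input_tokens, output_tokens).
    """
    start = time.perf_counter()
    text, input_tokens, output_tokens = call_model(prompt)
    recorder.record(model, input_tokens, output_tokens, time.perf_counter() - start)
    return text


def fake_model(prompt):
    # Stand-in for a real client; counts whitespace-separated words as tokens.
    completion = "ok"
    return completion, len(prompt.split()), len(completion.split())


recorder = LLMUsageRecorder()
traced_call(recorder, "example-model", fake_model, "summarize the order status")
traced_call(recorder, "example-model", fake_model, "list open incidents")
totals = recorder.totals_by_model()
```

In a real deployment the same attributes would be attached to spans by an OTel instrumentation library and exported to a backend; the aggregation step here simply shows how token and cost dashboards can be derived from per-call records.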

Trend 10

API-driven automation reshapes infrastructure delivery

Organizations are increasingly building internal PaaS-style platforms that expose APIs for development, continuous integration and continuous delivery/deployment (CI/CD), and infrastructure orchestration. These internal marketplaces provide a simplified, often opinionated abstraction over cloud or third-party SaaS services, while giving the organization control and insights into the use of these services, which can be critical when dealing with security vulnerabilities and compliance aspects.

Trend 11

Edge clusters mature into cloud-native runtimes

Edge clusters are maturing into lightweight, cloud-native runtimes. Organizations run small Kubernetes flavors (k3s and the like) or purpose-built orchestrators onsite to host containerized microservices close to users and devices. This delivers millisecond-level responsiveness while keeping control planes central. Functions at the edge and event-driven microservices let teams push only the minimal processing to edge clusters, lowering cold-start costs with prewarmed runtimes and reducing per-request latency for APIs. Frameworks and platforms catering to this space are provided by both major cloud providers (AWS IoT Greengrass, Azure IoT Edge) and the open-source space (Kubeless, Apache OpenWhisk, FaaS, Knative).
